Richard Dawkins is making waves with an essay in which he claims that Claude, the LLM developed by Anthropic, is conscious. I personally doubt it, but I think the topic merits a thread. The essay is behind a paywall, but I’ve paid the $3 to access it. That gets you a three-month introductory subscription to UnHerd magazine, which hosts the essay, with the option to cancel before they start charging the $7 monthly fee.

Category Archives: Information Theory

Recap redux

- Part 1: Stark Incompetence

- Part 2: The Old Switcheroo

- Part 3: A Nontechnical Recap

- Part 4: Recap Redux

Eric Holloway has returned to The Skeptical Zone, following a long absence. He expects to get responses to his potshot at phylogenetic inference, though he has never answered three questions of mine about his own work on algorithmic specified complexity. Here I abbreviate and clarify the recap I previously posted, and introduce remarks on the questions.

If there is a fundamental flaw in the second half, as you claim, then I’ll retract it if it is unfixable.

— Eric Holloway, January 2, 2020

A nontechnical recap

- Part 1: Stark Incompetence

- Part 2: The Old Switcheroo

- Part 3: A Nontechnical Recap

I recommend that you read “Recap Redux” instead of this post.

In Section 4 of their article, Nemati and Holloway claim to have identified an error in a post of mine. They do not cite the post, but instead name me, and link to the homepage of The Skeptical Zone. Thus there can be no question as to whether the authors regard technical material that I post here as worthy of a response in Bio-Complexity. (A year earlier, George Montañez modified a Bio-Complexity article, adding information that I supplied in precisely the post that Nemati and Holloway address.) Interacting with me at TSZ, a month ago, Eric Holloway acknowledged error in an equation that I had told him was wrong, expressed interest in seeing the next part of my review, and said, “If there is a fundamental flaw in the second half, as you claim, then I’ll retract it if it is unfixable.” I subsequently put a great deal of work into “The Old Switcheroo,” trying to anticipate all of the ways in which Holloway might wiggle out of acknowledging his errors. Evidently I left him no avenue of escape, given that he now refuses to engage at all, and insists that I submit my criticisms to Bio-Complexity.

Continue reading

Questions for Eric Holloway…

… in regard to his article “Expected Algorithmic Specified Complexity”

Question 1. Is your definition of algorithmic specified complexity precisely equivalent to the definition given by Ewert, Dembski, and Marks in “Algorithmic Specified Complexity,”

(A) ![]()

even though you write ![]() in place of ![]()?

Continue reading

The old switcheroo

Eric Holloway has littered cyberspace with claims, arrived at by faulty reasoning, that the “laws of information conservation (nongrowth)” in data processing hold for algorithmic specified complexity as for algorithmic mutual information. It is essential to understand that there are infinitely many measures of algorithmic specified complexity. Nemati and Holloway would have us believe that each of them is a quantification of the meaningful information in binary strings, i.e., finite sequences of 0s and 1s. If Holloway’s claims were correct, then there would be a limit on the increase in algorithmic specified complexity resulting from execution of a computer program (itself a string). Whichever one of the measures were applied, the difference in algorithmic specified complexity of the string output by the process and the string input to the process would be at most the program length plus a constant. It would follow, more generally, that an approximate upper bound on the difference in algorithmic specified complexity of strings $y$ and $x$ is the length of the shortest program that outputs $y$ on input of $x$. Of course, the measure must be the same for both strings. Otherwise it would be absurd to speak of (non-)conservation of a quantity of algorithmic specified complexity.
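In symbols, the disputed claim can be sketched as follows. The notation is an assumption made for illustration: $\mathrm{ASC}(x, C, p) = -\log_2 p(x) - K(x \mid C)$ is the form given by Ewert, Dembski, and Marks, with $p$ a probability distribution on binary strings, $C$ a context string, and $K(\cdot \mid \cdot)$ conditional Kolmogorov complexity; $y$ stands for the output string and $x$ for the input string.

$$\mathrm{ASC}(y, C, p) - \mathrm{ASC}(x, C, p) \;\lesssim\; K(y \mid x)$$

The same $C$ and $p$ appear in both terms on the left, which is the sense in which the measure must be the same for both strings.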

Continue reading

Of “models” and “algorithms”

I was short with Joe Felsenstein in the comments section of “Stark Incompetence,” a post in which I address, well, um, the stark incompetence on display in a recent publication of Eric Holloway. I have apologized to Joe, and promised to make amends with a brief post on the topic that he wants to address. Now, the topic is a putative model that Eric introduced in “Mutual Algorithmic Information, Information Non-growth, and Allele Frequency” (or perhaps an improved version of the model). Here is a remark that I addressed to Joe:

Tom English: As you know, if a putative model is logically inconsistent, then it is not a model of anything. I claim that EricMH’s putative model is logically inconsistent. You had better prove that it is consistent, or turn it into something that you can prove is consistent, before going on to discuss its biological relevance.

I will not have to go far into Eric’s post to identify inconsistencies. After explaining the inconsistencies, which I doubt can be eliminated, I will remark on why the “model” is not worth salvaging. The gist is that Eric’s attempted analysis puts a halting, output-generating simulator of a non-halting, non-output-generating evolutionary process in place of the process itself. An analysis of the simulator would not, in any case, be an analysis of the simuland.

Non-conservation of algorithmic specified complexity…

… proved without reference to infinity and the empty string.

Some readers have objected to my simple proof that computable transformation $f(x)$ of a binary string $x$ can result in an infinite increase of algorithmic specified complexity (ASC). Here I give a less-simple proof that there is no upper bound on the difference in ASC of $f(x)$ and $x$. To put it more correctly, I show that the difference can be any positive real number.

Updated 12/8/2019: The assumptions of my theorem were unnecessarily restrictive. I have relaxed the assumptions, without changing the proof.

Continue reading

Evo-Info 4 addendum

Subjects: Evolutionary computation. Information technology–Mathematics.

In “Evo-Info 4: Non-Conservation of Algorithmic Specified Complexity,” I neglected to explain that algorithmic mutual information is essentially a special case of algorithmic specified complexity. This leads immediately to two important points:

- Marks et al. claim that algorithmic specified complexity is a measure of meaning. If this is so, then algorithmic mutual information is also a measure of meaning. Yet no one working in the field of information theory has ever regarded it as such. Thus Marks et al. bear the burden of explaining how they have gotten the interpretation of algorithmic mutual information right, and how everyone else has gotten it wrong.

- It should not come as a shock that the “law of information conservation (nongrowth)” for algorithmic mutual information, a special case of algorithmic specified complexity, does not hold for algorithmic specified complexity in general.
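One way to see the special-case relationship, sketched under assumptions that this post does not spell out: take the Ewert, Dembski, and Marks form $\mathrm{ASC}(x, C, p) = -\log_2 p(x) - K(x \mid C)$, let the context be a string $y$, and let the probability assignment be $p(x) = 2^{-K(x)}$ (strictly a semimeasure rather than a normalized distribution, which is one reason for the word “essentially”). Then

$$\mathrm{ASC}(x, y, p) = -\log_2 2^{-K(x)} - K(x \mid y) = K(x) - K(x \mid y),$$

which agrees with the algorithmic mutual information $I(x : y) = K(x) - K(x \mid y^*)$ up to a logarithmic term, since the standard definition conditions on a shortest program $y^*$ for $y$ rather than on $y$ itself.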

My formal demonstration of unbounded growth of algorithmic specified complexity (ASC) in data processing also serves to counter the notion that ASC is a measure of meaning. I did not explain this in Evo-Info 4, and will do so here, suppressing as much mathematical detail as I can. You need to know that a binary string is a finite sequence of 0s and 1s, and that the empty (length-zero) string is denoted $\lambda$. The particular data processing that I considered was erasure: on input of any binary string $x$, the output $f(x)$ is the empty string. I chose erasure because it rather obviously does not make data more meaningful. However, part of the definition of ASC is an assignment of probabilities to all binary strings. The ASC of a binary string is infinite if and only if its probability is zero. If the empty string is assigned probability zero, and all other binary strings are assigned probabilities greater than zero, then the erasure of a nonempty binary string results in an infinite increase in ASC. In simplified notation, the growth in ASC is

$$\mathrm{ASC}(f(x)) - \mathrm{ASC}(x) = \infty$$

for all nonempty binary strings $x$. Thus Marks et al. are telling us that erasure of data can produce an infinite increase in meaning.
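The erasure argument can be illustrated numerically. The sketch below is not the formal demonstration; it rests on two assumptions introduced only for illustration. First, a toy probability assignment `p(x) = 2 ** (-2 * len(x))` for nonempty strings and `p("") = 0`. Second, a computable stand-in for conditional Kolmogorov complexity (which is itself uncomputable), namely the bit length of the zlib-compressed string. All function names are hypothetical.

```python
import math
import zlib


def neg_log2_p(x: str) -> float:
    """-log2 p(x) for a toy distribution that gives the empty string probability zero.

    Assumed distribution: p(x) = 2 ** (-2 * len(x)) for nonempty x, and p("") = 0.
    The 2**n strings of length n share total probability 2**-n, so the
    probabilities of all nonempty strings sum to 1.
    """
    if x == "":
        return math.inf  # p("") = 0, so -log2 p("") is infinite
    return 2.0 * len(x)


def k_proxy(x: str) -> float:
    """Crude stand-in for K(x | C): number of bits in the zlib-compressed string.

    Kolmogorov complexity is uncomputable; this proxy is for illustration only.
    """
    return 8.0 * len(zlib.compress(x.encode("ascii")))


def asc(x: str) -> float:
    """Toy ASC: -log2 p(x) minus the complexity proxy."""
    return neg_log2_p(x) - k_proxy(x)


def erase(x: str) -> str:
    """Erasure: every input is mapped to the empty string."""
    return ""


def asc_growth_under_erasure(x: str) -> float:
    """Difference in toy ASC between the erased output and the input."""
    return asc(erase(x)) - asc(x)
```

For any nonempty input, `asc(x)` is finite while `asc(erase(x))` is infinite, so the difference is infinite. The result depends only on the empty string having probability zero, not on the particular complexity proxy.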

Behe and Co. in Canada

This past Friday I bumped into Dr. Michael Behe, and did so again on Saturday, when he was with Drs. Brian Miller (DI; Research Coordinator, CSC) and Robert Larmer (UNB), currently President of the Canadian Society of (Evangelical) Christian Philosophers. The venue was a local apologetics conference (https://www.diganddelve.ca/). The topic of the event, “Science vs. Atheism: Is Modern Science Making Atheism Improbable?”, makes it relevant here at TSZ, where there are more atheists & agnostics among ‘skeptics’ than average.

On the positive side, I would encourage folks who visit this site to go to such events for learning/teaching purposes. Whether or not you go for the ID speakers, good conversations are available among people honestly wrestling with and questioning the relationship between science, philosophy and theology/worldview, including on issues related to evolution, creation, and intelligence in the universe or on Earth. But if you are using ‘science’ as a worldview weapon against ‘religion’ or ‘theology’, don’t go to such events expecting miracles for your personal worldview in conversation with others, or credibility in scientific publications or in the classroom. That argument just won’t fly anymore, and the Discovery Institute, to their credit, has played a role in making this shift happen, though how large a role may still be difficult to tell.

A question arises: what would be the first question you would ask or thing you would say to Michael Behe if you bumped into him on the street?

Continue reading

No cloning theorem: the double edge sword

Essentially, there are 2 types of cloning…

Biological cloning leading to clones such as identical twins who share exactly the same DNA.

Or artificial, genetically engineered cloning leading to such clones as plants whose DNA is also identical.

But there exists a more precise kind of cloning in physics that reaches all the way to the subatomic level of particles. Everything in the universe, including DNA and us, is made up of elementary quantum particles and the forces by which they interact. This kind of cloning is more detailed because it involves the superposition of subatomic particles (a particle can be in more than one position or state at a time): the relative positions, momenta and energy levels of every particle, and all of their bonds and interactions, are exactly the same in the copy (clone) as in the original.

This kind of perfect cloning is impossible. It has been proven mathematically and formulated into the no cloning theorem, which states:

“In physics, the no-cloning theorem states that it is impossible to create an identical copy of an arbitrary unknown quantum state.”
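The standard textbook argument behind the theorem is short. As a sketch, suppose for contradiction that a single unitary operation $U$ and a blank state $|0\rangle$ could copy every state, so that for any two states $|\psi\rangle$ and $|\varphi\rangle$ (symbols chosen here for illustration)

$$U\,|\psi\rangle|0\rangle = |\psi\rangle|\psi\rangle, \qquad U\,|\varphi\rangle|0\rangle = |\varphi\rangle|\varphi\rangle.$$

Unitary operations preserve inner products, so taking the inner product of the two equations gives

$$\langle\psi|\varphi\rangle = \langle\psi|\varphi\rangle^{2},$$

which forces $\langle\psi|\varphi\rangle$ to be 0 or 1. A single device can therefore copy only states that are identical or orthogonal to one another, not an arbitrary unknown state.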

How do those two types of cloning apply to life systems, such as us, our DNA and so forth?

Continue reading

ID 3.0? The new Bradley Center at the DI – is Dembski returning from retirement?

Back in 2016, William Dembski officially ‘retired’ from ‘Intelligent Design’ theory & the IDM. He wrote that “the camaraderie I once experienced with colleagues and friends in the movement has largely dwindled.” https://billdembski.com/personal/official-retirement-from-intelligent-design/ This might have come rather late, after Dembski’s star had already started to fade. Indeed, it was more than 10 years after the Dover trial debacle and already long after I personally heard another of the leaders of the IDM at the DI in 2003 say he no longer reads Dembski’s books. Yet no doubt Dr. Dembski was one of the leading voices, if not the leading voice, of the IDM for almost 2 decades. Here’s one UK IDist lamenting Dembski’s statement: https://designdisquisitions.wordpress.com/2017/02/19/william-dembski-moves-on-from-id-some-reflections/ Yet when a new paycheck from the Discovery Institute was offered in the Bradley Center, Dembski seems to have gotten right back on the ideological bandwagon in Seattle & reversed his dwindling of IDist camaraderie.

Is information the fundamental building block of life?

Paul Davies, cosmologist, physicist and agnostic, together with Sara Imari Walker, proposed a theory that information, and not chemicals, is at the very foundation of life…

Why?

Continue reading

Self-Assembly of Nano-Machines: No Intelligence Required?

In my research, I have recently come across the self-assembling proteins and molecular machines called nano-machines, one of them being the bacterial flagellum…

Have you ever wondered what mechanism is involved in the self-assembly process?

I’m not even going to ask the question how the self-assembly process has supposedly evolved, because it would be offensive to engineers who struggle to design assembly lines that require the assembly, operation and supervision of intelligence… So far engineers can’t even dream of designing self-assembling machines… But when they do accomplish that one day, it will be used as proof that random, natural processes could have done it too… in life systems… lol

If you don’t know what I’m talking about, just watch this video:

Evo-Info 4: Non-conservation of algorithmic specified complexity

Subjects: Evolutionary computation. Information technology–Mathematics.

The greatest story ever told by activists in the intelligent design (ID) socio-political movement was that William Dembski had proved the Law of Conservation of Information, where the information was of a kind called specified complexity. The fact of the matter is that Dembski did not supply a proof, but instead sketched an ostensible proof, in No Free Lunch: Why Specified Complexity Cannot Be Purchased without Intelligence (2002). He did not go on to publish the proof elsewhere, and the reason is obvious in hindsight: he never had a proof. In “Specification: The Pattern that Signifies Intelligence” (2005), Dembski instead radically altered his definition of specified complexity, and said nothing about conservation. In “Life’s Conservation Law: Why Darwinian Evolution Cannot Create Biological Information” (2010; preprint 2008), Dembski and Marks attached the term Law of Conservation of Information to claims about a newly defined quantity, active information, and gave no indication that Dembski had used the term previously. In Introduction to Evolutionary Informatics, Marks, Dembski, and Ewert address specified complexity only in an isolated chapter, “Measuring Meaning: Algorithmic Specified Complexity,” and do not claim that it is conserved. From the vantage of 2018, it is plain to see that Dembski erred in his claims about conservation of specified complexity, and later neglected to explain that he had abandoned them.

Does embryo development process require ID?

Jonathan Wells, who is an embryologist and an ID advocate, has a very interesting paper and video on the issue of ontogeny (embryo development) and the origins of information needed in the process of cell differentiation…

Wells thinks that a major piece of information needed in the process of embryo development can’t be explained by DNA, and therefore may require an intervention of an outside source of information, such as ID/God…

If you don’t want to watch the whole video, starting at about the 40-minute mark is just as good; the part from 43 minutes on is especially relevant.

Does gpuccio’s argument that 500 bits of Functional Information implies Design work?

On Uncommon Descent, poster gpuccio has been discussing “functional information”. Most of gpuccio’s argument is a conventional “islands of function” argument. Not being very knowledgeable about biochemistry, I’ll happily leave that argument to others.

But I have been intrigued by gpuccio’s use of Functional Information, in particular gpuccio’s assertion that if we observe 500 bits of it, this is a reliable indicator of Design, as here, at about the 11th sentence of point (a):

… the idea is that if we observe any object that exhibits complex functional information (for example, more than 500 bits of functional information ) for an explicitly defined function (whatever it is) we can safely infer design.

I wonder how this general method works. As far as I can see, it doesn’t work. There would seem to be three possible ways of arguing for it, and in the end two don’t work and one is just plain silly. Which of these is the basis for gpuccio’s statement? Let’s investigate …
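To get a feel for the size of the 500-bit threshold, here is a minimal sketch. It assumes the usual Szostak/Hazen-style definition, in which functional information is the negative base-2 logarithm of the fraction of sequences that perform the function at or above a given level. That definition is an assumption here, since gpuccio’s exact formulation is not quoted above, and the function name is hypothetical.

```python
import math
from fractions import Fraction


def functional_information_bits(functional_fraction: Fraction) -> float:
    """Return -log2 of the fraction of sequences that perform the function.

    The fraction is passed as an exact Fraction so that extremely small
    values such as 1 / 2**500 do not lose precision.
    """
    if not 0 < functional_fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    # -log2(n / d) = log2(d) - log2(n); math.log2 accepts arbitrarily large ints.
    return math.log2(functional_fraction.denominator) - math.log2(
        functional_fraction.numerator
    )


# Fraction of sequences corresponding to exactly 500 bits.
threshold_fraction = Fraction(1, 2**500)
bits = functional_information_bits(threshold_fraction)
```

Under this definition, 500 bits corresponds to a functional fraction of 2^-500, roughly 3 × 10^-151 of all sequences.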

Biological Information

- ‘Information’, ‘data’ and ‘media’ are distinct concepts. Media is the mechanical support for data and can be any material including DNA and RNA in biology. Data is the symbols that carry information and are stored and transmitted on the media. ACGT nucleotides forming strands of DNA are biologic data. Information is an entity that answers a question and is represented by data encoded on a particular media. Information is always created by an intelligent agent and used by the same or another intelligent agent. Interpreting the data to extract information requires a deciphering key such as a language. For example, proteins are made of amino acids selected based on a translation table (the deciphering key) from nucleotides.

Evolutionary Informatics catches modelers doing modeling

As Tom English and others have discussed previously, there was a book published last year called Introduction to Evolutionary Informatics, the authors of which are Marks, Dembski, and Ewert.

The main point of the book is stated as:

Indeed, all current models of evolution require information from an external designer to work.

(The “external designer” they are talking about is the modeler who created the model.)

Another way they state their position:

We show repeatedly that the proposed models all require inclusion of significant knowledge about the problem being solved.

Somehow, they think it needs to be shown that modelers put information and knowledge into their models. This displays a fundamental misunderstanding of models and modeling.

It is a simple fact that a model of any kind, in its entirety, comes from a modeler. Any information in the model, however one defines information, is put in the model by the modeler. All structures and behaviors of any model are results of modeling decisions made by the modeler. Models are the modelers’ conceptions of reality. It is expected that modelers will add the best information they think they have in order to make their models realistic. Why wouldn’t they? For people who actually build and use models, like engineers and scientists, the main issue is realism.

To see a good presentation on the fundamentals of modeling, I recommend the videos and handbooks available free online from the Society for Industrial and Applied Mathematics (SIAM).

For a good discussion on what it really means for a model to “work,” I recommend a paper called “Concepts of Model Verification and Validation”, which was put out by Los Alamos National Laboratory.

Prof. Marks gets lucky at Cracker Barrel

Subjects: Evolutionary computation. Information technology–Mathematics.

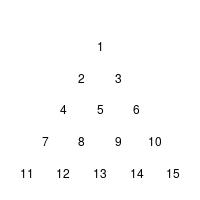

Yesterday, I looked again through “Introduction to Evolutionary Informatics” and spotted the Cracker Barrel puzzle in section 5.4.1.2, “Endogenous information of the Cracker Barrel puzzle” (p. 128). The rules of this variant of triangular peg solitaire are described in the text (or can be found in Wikipedia’s article on the subject).

The humble authors then describe a simulation of the game to calculate how probable it is to solve the puzzle using moves at random:
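A random-play simulation of this kind can be sketched in a few lines. This is a minimal sketch under stated assumptions, not the authors’ code: it assumes the 15-hole triangular board, a starting position with the top hole empty, and a player who picks uniformly at random among the currently legal jumps until none remain. All names are hypothetical. The endogenous information is then taken to be the negative base-2 logarithm of the estimated success probability.

```python
import math
import random

# Holes of the 15-hole triangular board as (row, col) with 0 <= col <= row <= 4.
HOLES = [(r, c) for r in range(5) for c in range(r + 1)]
HOLE_SET = frozenset(HOLES)
# The six jump directions on a triangular grid in these coordinates.
DIRECTIONS = [(0, 1), (0, -1), (1, 0), (-1, 0), (1, 1), (-1, -1)]


def legal_moves(pegs):
    """Return all (start, jumped, landing) triples available for the given pegs."""
    moves = []
    for r, c in sorted(pegs):  # sorted for reproducible ordering
        for dr, dc in DIRECTIONS:
            over = (r + dr, c + dc)
            dest = (r + 2 * dr, c + 2 * dc)
            # A jump needs a peg to jump over and an empty hole to land in.
            if over in pegs and dest in HOLE_SET and dest not in pegs:
                moves.append(((r, c), over, dest))
    return moves


def play_random_game(rng, empty_hole=(0, 0)):
    """Play uniformly random legal moves; return the number of pegs left."""
    pegs = set(HOLE_SET - {empty_hole})
    while True:
        moves = legal_moves(pegs)
        if not moves:
            return len(pegs)
        start, over, dest = rng.choice(moves)
        pegs -= {start, over}  # the jumping peg leaves, the jumped peg is removed
        pegs.add(dest)


def estimate_success_probability(trials, seed=0):
    """Monte Carlo estimate of the chance that random play ends with one peg."""
    rng = random.Random(seed)
    wins = sum(play_random_game(rng) == 1 for _ in range(trials))
    return wins / trials


def endogenous_information_bits(p):
    """-log2 p, with infinity when no success was observed."""
    return math.inf if p == 0 else -math.log2(p)
```

Calling `endogenous_information_bits(estimate_success_probability(100_000))` gives an estimate in bits. The value depends on the assumed starting hole and on the move-selection rule, so it need not match the figure reported in the book.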

Continue reading