Subjects: Evolutionary computation. Information technology–Mathematics.

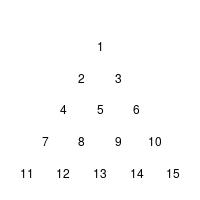

Yesterday, I looked again through “Introduction to Evolutionary Informatics” and spotted the Cracker Barrel puzzle in section 5.4.1.2, “Endogenous information of the Cracker Barrel puzzle” (p. 128). The rules of this variant of triangular peg solitaire are described in the text (or can be found in Wikipedia’s article on the subject).

The humble authors¹ then describe a simulation of the game to calculate how probable it is to solve the puzzle by making moves at random:

A search typically requires initialization. For the Cracker Barrel puzzle, all of the 15 holes are filled with pegs and, at random, a single peg is removed. This starts the game. Using random initialization and random moves, simulation of four million games using a computer program resulted in an estimated win probability p = 0.0070 and an endogenous information of

Winning the puzzle using random moves with a randomly chosen initialization (the choice of the empty hole at the start of the game) is thus a bit more difficult than flipping a coin seven times and getting seven heads in a row
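As a quick check of the quoted figures, assuming that endogenous information is defined as $-\log_2 p$ of the success probability and written $I_\Omega$ (both the definition and the symbol are assumptions here):

$$I_\Omega = -\log_2 p = -\log_2 0.0070 \approx 7.16 \text{ bits}$$

Seven heads in a row have probability $2^{-7} \approx 0.0078$, slightly more than $0.0070$, which is consistent with the “a bit more difficult” remark.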

Naturally, I created such a simulation in R for myself: I encoded all thirty-six moves that could occur in a matrix cb.moves, each row indicating the jumping peg, the peg which is jumped over, and the hole in which the jumping peg lands.
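One way such a move matrix might be built is sketched below. It assumes the holes are numbered row by row from the tip (1 at the top, 11 to 15 in the bottom row); the helper `hole` and the list `directions` are names made up for this sketch, and only `cb.moves` comes from the post.

```r
# Assumed numbering: hole 1 at the tip, then row by row, so the bottom row is 11..15
hole <- function(i, j) i * (i - 1) / 2 + j   # index of column j in row i

# The three directions along which pegs can jump, as (row step, column step)
directions <- list(c(0, 1), c(1, 0), c(1, 1))

moves <- list()
for (i in 1:5) for (j in 1:i) for (d in directions) {
  i2 <- i + 2 * d[1]
  j2 <- j + 2 * d[2]
  # keep the line only if its far end is still on the board
  if (i2 <= 5 && j2 <= i2) {
    a <- hole(i, j)
    m <- hole(i + d[1], j + d[2])
    b <- hole(i2, j2)
    # each line of three holes allows a jump in both directions
    moves[[length(moves) + 1]] <- c(a, m, b)
    moves[[length(moves) + 1]] <- c(b, m, a)
  }
}
cb.moves <- do.call(rbind, moves)   # one row per move: start, jumped-over, landing hole
```

There are 18 lines of three holes on the board, and each can be jumped in either direction, which gives the thirty-six rows.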

And here is the little function which simulates a single random game:

    cb.simul <- function(pos){
      # pos: boolean vector of length 15 indicating position of pegs
      # a move is allowed if there is a peg at the start position & on the field
      # which is jumped over, but not at the final position
      allowed.moves <- pos[cb.moves[,1]] & pos[cb.moves[,2]] & (!pos[cb.moves[,3]])
      # if no move is allowed, return number of pegs left
      if(sum(allowed.moves)==0) return(sum(pos))
      # otherwise, choose an allowed move at random
      number.of.move <- ((1:36)[allowed.moves])[sample(1:sum(allowed.moves),1)]
      pos[cb.moves[number.of.move,]] <- c(FALSE,FALSE,TRUE)
      return(cb.simul(pos))
    }

I ran the simulation 4,000,000 times, changing the start position at random. But my estimated win probability came out at only about two thirds of the number in the text. How could this be? Why were Prof. Marks et al. so much luckier than I? I reran the simulation, checked the code, washed, rinsed, repeated: no fundamental change.
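A driver for such a run might look like the sketch below. It is self-contained, so it rebuilds the move table from a hard-coded list of the 18 lines (assuming holes are numbered row by row from the tip) and uses an iterative variant of the random game. The names `lines3`, `play.random` and `estimate.win` are invented for this sketch, and the number of games is kept small so that it finishes quickly.

```r
# Assumed numbering: hole 1 at the tip, then row by row down to 11..15
lines3 <- matrix(c( 4, 5, 6,   7, 8, 9,   8, 9,10,  11,12,13,  12,13,14,  13,14,15,
                    1, 2, 4,   2, 4, 7,   4, 7,11,   3, 5, 8,   5, 8,12,   6, 9,13,
                    1, 3, 6,   3, 6,10,   6,10,15,   2, 5, 9,   5, 9,14,   4, 8,13),
                 ncol = 3, byrow = TRUE)
cb.moves <- rbind(lines3, lines3[, 3:1])   # each line can be jumped both ways

# Play one game with uniformly random moves; returns the number of pegs left
play.random <- function(pos) {
  repeat {
    ok <- which(pos[cb.moves[, 1]] & pos[cb.moves[, 2]] & !pos[cb.moves[, 3]])
    if (length(ok) == 0) return(sum(pos))      # no move left: game over
    m <- ok[sample.int(length(ok), 1)]         # pick one allowed move
    pos[cb.moves[m, ]] <- c(FALSE, FALSE, TRUE)
  }
}

# Estimate the win probability from n.games games with a random empty hole
estimate.win <- function(n.games) {
  left <- replicate(n.games, {
    pos <- rep(TRUE, 15)
    pos[sample.int(15, 1)] <- FALSE
    play.random(pos)
  })
  mean(left == 1)                              # a win leaves exactly one peg
}

set.seed(1)
p.hat <- estimate.win(20000)
```

For a win probability near 0.0045 and 4,000,000 games, the binomial standard error $\sqrt{p(1-p)/n}$ is about 0.00003, so sampling noise alone cannot account for a gap between 0.0045 and 0.0070.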


So I decided to look at all possible games and at the probability with which each occurs. The result was this little routine:

    cb.eval <- function(pos, prob){
      # pos: boolean vector of length 15 indicating position of pegs
      # prob: the probability with which this state occurs
      # a move is allowed if there is a peg at the start position & on the field
      # which is jumped over, but not at the final position
      allowed.moves <- pos[cb.moves[,1]] & pos[cb.moves[,2]] & (!pos[cb.moves[,3]])
      if(sum(allowed.moves)==0){
        # end of a game: prob now holds the probability that this game is played
        nr.of.pecks <- sum(pos)   # number of remaining pegs
        # the number of games ending with this many pegs is stored in a global variable
        cb.number[nr.of.pecks] <<- cb.number[nr.of.pecks]+1
        # the probability of this game is added to the appropriate place of the global variable
        cb.prob[nr.of.pecks] <<- cb.prob[nr.of.pecks] + prob
        return()
      }
      for(k in 1:sum(allowed.moves)){
        # moves are still possible, for each move the next stage will be calculated
        d <- pos
        number.of.move <- ((1:36)[allowed.moves])[k]
        d[cb.moves[number.of.move,]] <- c(FALSE,FALSE,TRUE)
        cb.eval(d,prob/sum(allowed.moves))
      }
    }

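I then calculated the probability of solving the puzzle for each of the fifteen possible starting positions. Averaged over all starting positions, the result was a win probability of roughly 0.0045, which is what the per-group values in the table below add up to.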

This fits my simulation, but not that of our esteemed and humble authors! What had happened?

An educated guess

I found it odd that the authors ran 4,000,000 simulations; 1,000,000 or 10,000,000 seem to be more commonly used numbers. But when you look at the puzzle, you see that it was not necessary for me to look at all fifteen possible starting positions. Whether the first peg is missing in position 1 or position 11 does not change the nature of the game: you can rotate the board and perform the same moves. Using symmetries, you find that there are only four essentially different starting positions: the black, red, and blue groups with three positions each, and the green group with six positions. For each group, you get a different probability of success:

| group | black | green | red | blue |
| --- | --- | --- | --- | --- |
| prob. of choosing this group | .2 | .4 | .2 | .2 |
| prob. of success | .00686 | .00343 | .00709 | .001726 |
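The split into three groups of three holes and one group of six can be checked with a short sketch. It assumes holes numbered row by row from the tip and describes each hole by three barycentric coordinates (its distances from the three sides of the triangle); rotations and reflections of the board only permute these coordinates, so holes with the same sorted triple are equivalent. The names `holes`, `bary`, `key` and `groups` are made up for this sketch, and it makes no claim about which colour belongs to which group.

```r
# Row and column of each hole, numbered 1..15 row by row from the tip (assumption)
holes <- do.call(rbind, lapply(1:5, function(i) cbind(row = i, col = 1:i)))

# Barycentric coordinates: distance from the bottom, left and right side of the board
bary <- cbind(5 - holes[, "row"],
              holes[, "col"] - 1,
              holes[, "row"] - holes[, "col"])

# A symmetry of the triangle permutes the three coordinates,
# so the sorted triple identifies the symmetry class of a hole
key <- apply(bary, 1, function(v) paste(sort(v), collapse = ""))
groups <- split(1:15, key)

lengths(groups)   # size of each symmetry class
```

Under this numbering the classes are the three corners, the six edge holes next to a corner, the three edge midpoints, and the three interior holes.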

One quite obvious explanation for the authors' result is that they did not run a single simulation of 4,000,000 games with random starting positions, but instead simulated 1,000,000 games for each of the four groups. Unfortunately, they then either failed to combine their results and reported only the result for the black or the red group (or both), or they only thought they had switched starting positions from one batch of simulations to the next, but in fact always used the black or the red one.
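Combining the four group results properly means weighting each success probability by the chance of starting in that group. With the values from the table above:

$$p = 0.2 \cdot 0.00686 + 0.4 \cdot 0.00343 + 0.2 \cdot 0.00709 + 0.2 \cdot 0.001726 \approx 0.00451$$

That is about 64% of the book's 0.0070, whereas the black and red values on their own round to 0.0069 and 0.0071.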

Is it a big deal?

It is easily corrected: instead of “For the Cracker Barrel puzzle, all of the 15 holes are filled with pegs and, at random, a single peg is removed.” they could write “For the Cracker Barrel puzzle, all of the 15 holes are filled with pegs and one peg at the tip of the triangle is removed.”

If the book were actually used as a textbook, simulating the Cracker Barrel puzzle would be an obvious exercise. I doubt that it is used that way anywhere, so no pupils were annoyed.

It is somewhat surprising that such an error occurs: it seems that the program was written by a single contributor and not checked. That seems to have been the case in previous publications, too.

Perhaps the authors thought that the program was too simple to be worthy of their full attention – and the more complicated stuff is properly vetted. OTOH, it could be a pattern….

Well, it will certainly be changed in the next edition.

(Cross-posted from here)

1. pp. 13, 118, 142, and 173

Tom English,

Great – looking at states instead of games really speeds things up!

What’s funny is that Marks et al. make weird remarks about dynamic programming and the “curse of dimensionality” (as Bellman, the originator of DP, called it) in their book, but clearly did not recognize when to apply it.
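To illustrate the idea of working with states rather than games, here is a minimal R sketch (not the Python code discussed in this thread). It pushes probability mass from each board state to its successors, level by level, so every state is handled once no matter how many games pass through it. The hole numbering (row by row from the tip) and the names `lines3`, `w` and `cb.state.probs` are assumptions of this sketch.

```r
# Assumed numbering: hole 1 at the tip, then row by row down to 11..15
lines3 <- matrix(c( 4, 5, 6,   7, 8, 9,   8, 9,10,  11,12,13,  12,13,14,  13,14,15,
                    1, 2, 4,   2, 4, 7,   4, 7,11,   3, 5, 8,   5, 8,12,   6, 9,13,
                    1, 3, 6,   3, 6,10,   6,10,15,   2, 5, 9,   5, 9,14,   4, 8,13),
                 ncol = 3, byrow = TRUE)
cb.moves <- rbind(lines3, lines3[, 3:1])   # each line can be jumped both ways

w <- 2^(0:14)   # a board is encoded as the sum of w over its occupied holes

# Distribution of the number of pegs left under random play,
# starting with every hole filled except `empty`
cb.state.probs <- function(empty) {
  cur <- numeric(2^15)              # cur[s + 1]: probability of reaching state s
  cur[sum(w[-empty]) + 1] <- 1
  stuck <- numeric(15)              # stuck[n]: probability of ending with n pegs
  for (pegs in 14:1) {              # every move removes exactly one peg
    nxt <- numeric(2^15)
    for (s in which(cur > 0) - 1) {
      pos <- (s %/% w) %% 2 == 1    # decode the state into a logical vector
      ok <- pos[cb.moves[, 1]] & pos[cb.moves[, 2]] & !pos[cb.moves[, 3]]
      if (!any(ok)) {               # dead end: record where the game stops
        stuck[pegs] <- stuck[pegs] + cur[s + 1]
        next
      }
      # successor states are distinct, so the indexed update below is safe
      succ <- s - w[cb.moves[ok, 1]] - w[cb.moves[ok, 2]] + w[cb.moves[ok, 3]]
      nxt[succ + 1] <- nxt[succ + 1] + cur[s + 1] / sum(ok)
    }
    cur <- nxt
  }
  stuck
}

# Win probability (one peg left) for each of the fifteen starting holes
win <- sapply(1:15, function(h) cb.state.probs(h)[1])
```

Since there are at most 2^15 board states, this touches far fewer objects than an enumeration of all move sequences; `mean(win)` then gives the win probability for a uniformly random starting hole.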

I commonly treat little problems like this as opportunities to gain experience in array processing with Numeric Python. What I did, to go from the 700 ms I got with my first implementation to 135 ms (the 150 ms run includes a check to see whether the association of states with counts/probabilities is closed under rotation of states) was to remove some loops from my Python code by introducing some operations on arrays. To do some of the array operations, I had to learn about explicit broadcasting of operands. What I’ve got looks clunky, and I think there’s still something I don’t understand. That’s the main reason I haven’t posted my code yet.

p. 130

p. 138

The best part: both values are wrong

I emailed Dr. Dr. Dembski, Dr. Marks, and Dr. Ewert about the error in their calculation a month ago: I got no response.

I wrote a short review of “An Introduction to Evolutionary Informatics” at amazon.com: Still waiting for the ultimate book on Intelligent Design