Alexander Lerchner, a researcher at Google’s DeepMind division, posted a preprint article a couple of months ago titled “The Abstraction Fallacy: Why AI Can Simulate But Not Instantiate Consciousness”, in which he makes the strong claim that computationally based AI will never be conscious. Here’s the abstract:


Category Archives: Probability and statistics

AI image generation via diffusion models

I’ve been experimenting with AI image generation and learning how it works under the hood, and it strikes me as being worthy of an OP. Writing about it will solidify my understanding, and I figure I might as well share what I’ve learned with others. I’ll start with a high-level view and then fill in the details as the thread progresses.

Genetic Drift for dummies

Stark incompetence

Let us start by examining a part of the article that everyone can see is horrendous. When I supply proofs, in a future post, that other parts of the article are wrong, few of you will follow the details. But even the mathematically uninclined should understand, after reading what follows, that

- the authors of a grotesque mangling of lower-level mathematics are unlikely to get higher-level mathematics correct, and

- the reviewers and editors who approved the mangling are unlikely to have given the rest of the article adequate scrutiny.

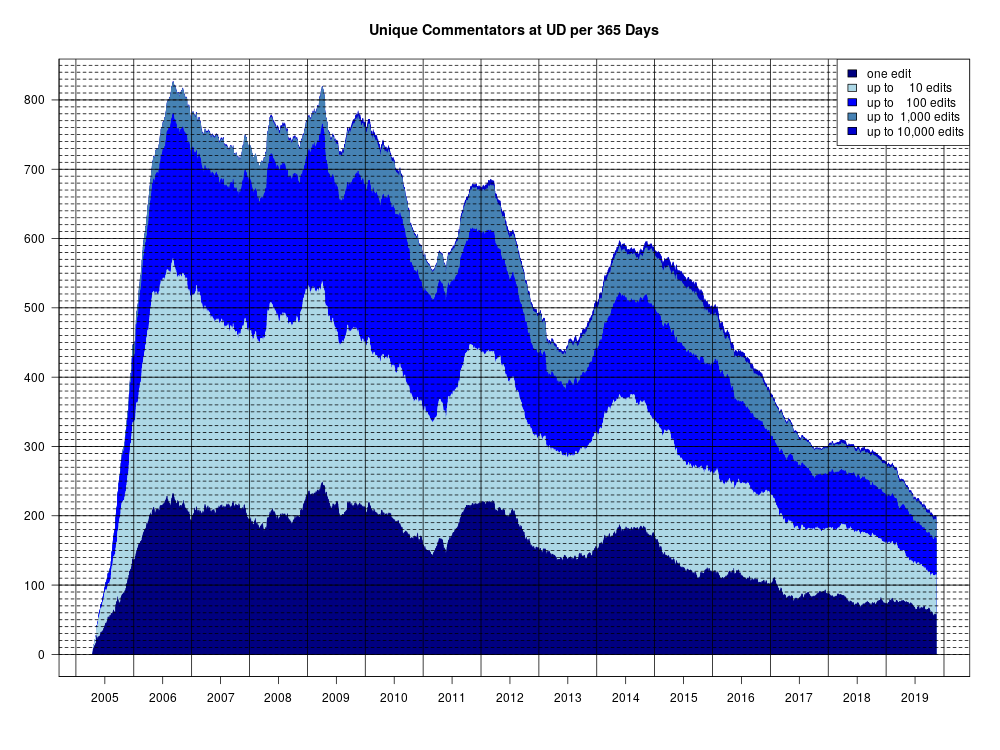

Number of Unique Commentators

For The Panda’s Thumb’s After the Bar Closes thread on Uncommon Descent, I created a graph of the number of unique commentators at UD:

Here, for each day from Apr 2005 until Nov 2019, I gave the number of different people who commented at UD at least once during the previous 365 days. The colors indicate the number of contributions such a commentator has made over this period of time.

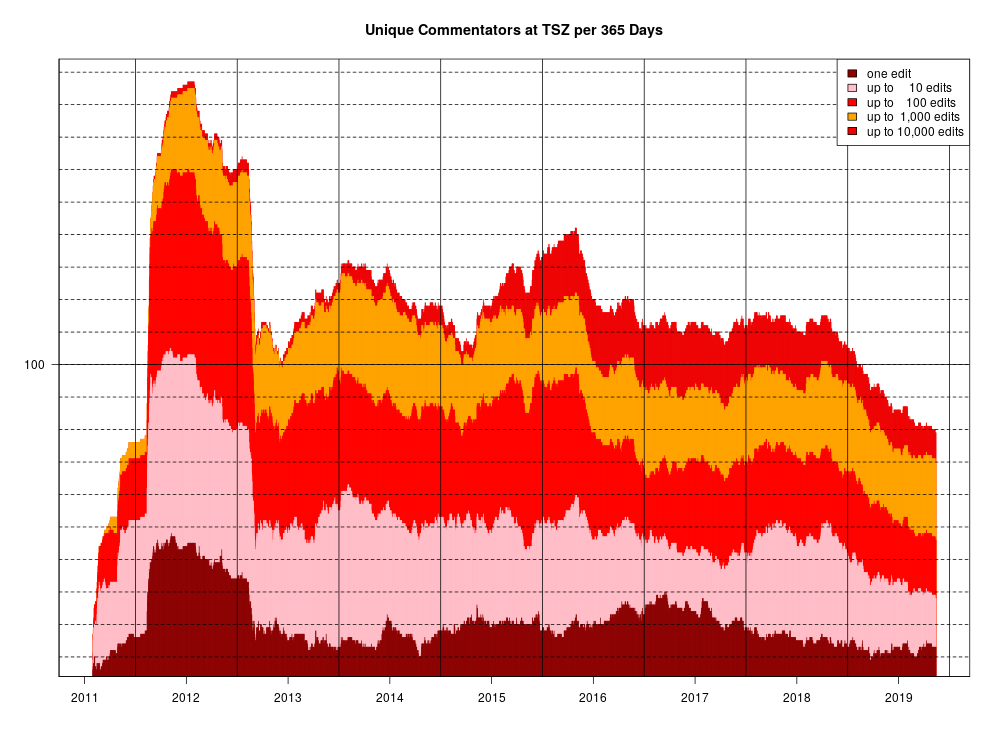
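For anyone who wants to reproduce this kind of count, here is a minimal sketch of the rolling-window idea described above. The data layout (a list of author/date pairs), the function name, and the choice to include the current day in the window are my assumptions for illustration, not the script actually used for the graph.

```python
from datetime import date, timedelta

def unique_commenters(comments, day, window_days=365):
    """Count distinct authors with at least one comment in the window ending on `day`."""
    start = day - timedelta(days=window_days)
    # A set keeps each author once, however many comments they made.
    return len({author for author, when in comments if start < when <= day})

# Hypothetical toy data: (author, date of comment)
comments = [
    ("alice", date(2019, 1, 10)),
    ("bob", date(2019, 6, 1)),
    ("alice", date(2019, 11, 5)),
    ("carol", date(2018, 3, 2)),
]

count = unique_commenters(comments, date(2019, 11, 30))
print(count)
```

Repeating the call for every day in the range gives one point per day of the curve. Splitting the set by how many comments each author made would give the colour bands.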

Obviously, the same can be done for “The Skeptical Zone”:

Enjoy!

Behe and Co. in Canada

This past Friday, I bumped into Dr. Michael Behe, and again on Saturday, along with Drs. Brian Miller (DI), Research Coordinator CSC, and Robert Larmer (UNB), currently President of the Canadian Society of (Evangelical) Christian Philosophers. Venue: local apologetics conference (https://www.diganddelve.ca/). The topic of the event “Science vs. Atheism: Is Modern Science Making Atheism Improbable?” makes it relevant here at TSZ, where there are more atheists & agnostics among ‘skeptics’ than average.

On the positive side, I would encourage folks who visit this site to go to such events for learning/teaching purposes. Whether or not you go for the ID speakers, good conversations are available among people honestly wrestling with and questioning the relationship between science, philosophy and theology/worldview, including on issues related to evolution, creation, and intelligence in the universe or on Earth. Don’t go to such events expecting miracles for your personal worldview in conversation with others, or credibility in scientific publications or in the classroom, if you are using ‘science’ as a worldview weapon against ‘religion’ or ‘theology’. That argument just won’t fly anymore, and the Discovery Institute, to its credit, has played a role in making this shift happen, though how large a role may still be difficult to tell.

A question arises: what would be the first question you would ask or thing you would say to Michael Behe if you bumped into him on the street?

Paging Dr. Holloway!

TSZ member Eric Holloway is the latest rising star of the “Intelligent Design” movement. Such a meteoric rise is bound to attract attention, and it has indeed caught the eye of veteran biologist Professor Joseph Felsenstein, who noticed a comment young Eric posted here at TSZ and remarks:

Eric Holloway just made a dramatic announcement on The Skeptical Zone, in Dieb’s thread on the number of posts at the ID site Uncommon Descent. In this comment he concludes “At least in my personal interactions with people, it seems like ID has won the debate”.

Professor Felsenstein has a few questions for Eric and hopes he may find the time to respond. I’m just helping out in case Eric has missed Joe’s post at the Panda’s Thumb.

Evolution and Probability

Probabilistic thinking is pervasive in evolutionary theory. It’s not a bad thing, just something that needs to be acknowledged and appropriately handled.

Does gpuccio’s 150-safe ‘thief’ example validate the 500-bits rule?

At Uncommon Descent, poster gpuccio has expressed interest in what I think of his example of a safecracker trying to open a safe with a 150-digit combination, or open 150 safes, each with its own 1-digit combination. It’s actually a cute teaching example, which helps explain why natural selection cannot find a region of “function” in a sequence space in such a case. The implication is that there is some point of contention that I failed to address, in my post which led to the nearly 2,000-comment-long thread on his argument here at TSZ. He asks:

By the way, has Joe Felsestein answered my argument about the thief? Has he shown how complex functional information can increase gradually in a genome?

Gpuccio has repeated his call for me to comment on his ‘thief’ scenario a number of times, including here, and UD reader “jawa” has taken up the torch (here and here, asking whether I have yet answered the thief argument), at first dramatically asking

Does anybody else wonder why these professors ran away when the discussions got deep into real evidence territory?

Any thoughts?

and then supplying the “thoughts” definitively (here)

we all know why those distinguished professors ran away from the heat of a serious discussion with gpuccio, it’s obvious: lack of solid arguments.

I’ll re-explain gpuccio’s example below the fold, and then point out that I never contested gpuccio’s safe example, but I certainly do contest gpuccio’s method of showing that “Complex Functional Information” cannot be achieved by natural selection. gpuccio manages to do that by defining “complex functional information” differently from Szostak and Hazen’s definition of functional information, in a way that makes his rule true. But gpuccio never manages to show that, when Functional Information is defined as Hazen and Szostak defined it, 500 bits of it cannot be accumulated by natural selection.
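To make the contrast in the safe example concrete, here is a rough worst-case count for the two scenarios. It is only a sketch: I am assuming decimal digits, and that in the 150-safe case the thief learns immediately when an individual safe opens. The variable names are mine.

```python
import math

DIGITS = 150  # length of the combination, from the example
BASE = 10     # assumption: each digit is decimal (0-9)

# One safe with a 150-digit combination: no feedback until every digit is right,
# so in the worst case the thief has to try every combination.
one_big_safe = BASE ** DIGITS

# 150 safes with one digit each: every safe opens independently,
# so the thief needs at most BASE tries per safe.
many_small_safes = DIGITS * BASE

# Size of the single-safe search space expressed in bits.
bits = DIGITS * math.log2(BASE)

print(many_small_safes)  # 1500
print(round(bits, 1))    # 498.3
```

The single big safe corresponds to roughly 498 bits, which is why the example sits so close to the 500-bit threshold, while the 150 small safes need at most 1,500 tries in total.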

Eric Holloway needs our help (new post at Panda’s Thumb)

Just a note that I have put up a new post at Panda’s Thumb in response to a post by Eric Holloway at the Discovery Institute’s new blog Mind Matters. Holloway declares that critics have totally failed to refute William Dembski’s use of Complex Specified Information to diagnose Design. At PT, I argue in detail that this is an exactly backwards reading of the outcome of the argument.

Commenters can post there, or here — I will try to keep track of both.

There has been a discussion of Holloway’s argument by Holloway and others at Uncommon Descent as well (links in the PT post). gpuccio also comments there, trying to get someone to call my attention to an argument about Complex Functional Information that gpuccio made in an earlier discussion of that topic. I will try to post a response on that here soon, separate from this thread.

Abusing Bayes

I am hoping that some members here are familiar with Bayes’ Theorem and willing to share their knowledge or at the very least interested enough in the topic to do some research and share their opinions.

– What is Bayes’ Theorem?

– What can it tell us?

– How does it work?

– Can Bayes’ Theorem be abused, and if so, how?
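As a starting point for the discussion, here is a small sketch of the theorem for a yes/no hypothesis H and a piece of evidence E: P(H|E) = P(E|H)P(H) / [P(E|H)P(H) + P(E|not H)P(not H)]. The function name and the numbers are hypothetical, chosen only to show how much the prior matters.

```python
def posterior(prior, likelihood, false_positive_rate):
    """P(H | E) by Bayes' theorem for a binary hypothesis H and evidence E.

    prior               = P(H)
    likelihood          = P(E | H)
    false_positive_rate = P(E | not H)
    """
    evidence = likelihood * prior + false_positive_rate * (1 - prior)
    return likelihood * prior / evidence

# Hypothetical numbers: 1% prior, P(E|H) = 90%, P(E|not H) = 5%
p = posterior(0.01, 0.90, 0.05)
print(round(p, 3))  # 0.154
```

Even with evidence that is 18 times more likely under H than under its negation, the posterior stays near 15% because the prior is small. Much of the room for abuse lies in choosing the prior and the likelihoods to suit a desired conclusion.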

Defending the validity and significance of the new theorem “Fundamental Theorem of Natural Selection With Mutations”, Part II: Our Mutation-Selection Model

– Bill Basener and John Sanford

Joe Felsenstein and Michael Lynch (JF and ML) wrote a blog post, “Does Basener and Sanford’s model of mutation vs selection show that deleterious mutations are unstoppable?” Their post is thoughtful and we are glad to continue the dialogue. We previously wrote the first part of a response to their post, focusing on the impact of R. A. Fisher’s work. This is the second part of our response, focusing on the modelling and mathematics. Our paper can be found at: https://link.springer.com/article/10.1007/s00285-017-1190-x

Defending the validity and significance of the new theorem “Fundamental Theorem of Natural Selection With Mutations”, Part I: Fisher’s Impact

– Bill Basener and John Sanford

Joe Felsenstein and Michael Lynch (JF and ML) wrote a blog post, “Does Basener and Sanford’s model of mutation vs selection show that deleterious mutations are unstoppable?” Their post is thoughtful and we are glad to continue the dialogue. This is the first part of a response to their post, focusing on the impact of R. A. Fisher’s work. Our paper can be found at: https://link.springer.com/article/10.1007/s00285-017-1190-x

First, a short background on our paper:

The primary thesis of our paper is that Fisher was wrong, in a fundamental way, in his belief that his theorem (“The Fundamental Theorem of Natural Selection”) implied the certainty of ongoing fitness increase. His claim was that mutations continually provide variance, and selection turns the variance into fitness increase. Central to his logic was that, collectively, mutations have a net zero effect on fitness. While Fisher assumed mutations are collectively fitness-neutral, it is now known that the vast majority of mutations are deleterious. So mutations can potentially push fitness down – even in the presence of selection.

Chance and Selection

Darwin’s conforming of his theory to the old vera causa ideal shows that the theory of natural selection is probabilistic not because it introduces a probabilistic law or principle, but because it invokes a probabilistic cause, natural selection, definable as nonfortuitous differential reproduction of hereditary variants.

A Problem to Solve

Evolution is often presented as problem-solving. Genetic algorithms are often offered as proofs of evolution’s ability to solve problems. Genetic algorithms are search algorithms.

As one book says:

Fundamentally, all evolutionary algorithms can be viewed as search algorithms which search through a set of possible solutions looking for the best – or “fittest” – solution.
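To show what “searching a set of possible solutions for the fittest” looks like in practice, here is a bare-bones sketch of a genetic algorithm. Everything in it is an assumption made for illustration: the toy objective (count the 1s in a bit string, often called OneMax), the parameter values, and the function names. It is not taken from the book.

```python
import random

def fitness(bits):
    # Toy objective (an assumption for illustration): the number of 1s.
    return sum(bits)

def evolve(n_bits=20, pop_size=30, generations=60, mutation_rate=0.05, seed=0):
    rng = random.Random(seed)
    # Start from a random population of candidate solutions.
    pop = [[rng.randint(0, 1) for _ in range(n_bits)] for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        pop.sort(key=fitness, reverse=True)
        history.append(fitness(pop[0]))
        parents = pop[: pop_size // 2]           # truncation selection
        children = []
        for _ in range(pop_size - len(parents)):
            a, b = rng.sample(parents, 2)
            cut = rng.randrange(1, n_bits)       # one-point crossover
            child = a[:cut] + b[cut:]
            # Flip each bit independently with probability mutation_rate.
            child = [1 - g if rng.random() < mutation_rate else g for g in child]
            children.append(child)
        pop = parents + children                 # parents survive, so the best is never lost
    return max(pop, key=fitness), history

best, history = evolve()
print(fitness(best), history[0])
```

Note that the fitness function is written down before the loop ever runs. That is the sense in which a problem-solving genetic algorithm is handed its problem from outside.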

Tom has asked me to specify a problem independently from the evolutionary process. Now I have to admit that I don’t really understand what that means. But I like Tom and I have a lot of respect for him, so I want to give it my best shot and see where it takes us. I’m also hoping this will shed some light on claims about how problem-solving genetic algorithms are designed to solve a particular problem.

Determining Probability

There’s been some debate here at TSZ recently about probability and the interpretation of probability.

I took some flak (my personal subjective opinion) for attempting to distinguish between calculating probabilities and estimating probabilities.

Yet in recent reading I came across this bit of text:

How do you determine the probability that a given event will occur? There are two ways: You can calculate it theoretically, or you can estimate it experimentally by performing a large number of trials.

– Probability: For the Enthusiastic Beginner, p. 335
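The distinction in the quote is easy to see side by side. The sketch below does both for one event, rolling a total of 7 with two fair dice. The event, the number of trials, and the seed are my choices for illustration.

```python
import random
from fractions import Fraction
from itertools import product

# Calculated: count favourable outcomes among the 36 equally likely rolls.
outcomes = list(product(range(1, 7), repeat=2))
calculated = Fraction(sum(1 for a, b in outcomes if a + b == 7), len(outcomes))

def estimate(trials, seed=0):
    """Estimated: fraction of simulated rolls of two dice that total 7."""
    rng = random.Random(seed)
    hits = sum(1 for _ in range(trials) if rng.randint(1, 6) + rng.randint(1, 6) == 7)
    return hits / trials

estimated = estimate(100_000)
print(calculated)  # 1/6
print(estimated)
```

The calculated value is exact and depends on the assumption that all 36 outcomes are equally likely. The estimated value depends only on the trials, and wanders around 1/6 with an error that shrinks as the number of trials grows.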

Introduction to Evolutionary Informatics

Subjects: Evolutionary computation. Information technology–Mathematics.

Yes, Tom English was right to warn us not to buy the book until the authors establish that their mathematical analysis of search applies to models of evolution.

But some of us have bought (or borrowed) the book nevertheless. As Denyse O’Leary said: It is surprisingly easy to read. I suppose she is right, as long as you do not try to follow their conclusions, but accept them as Gospel truth.

In the thread Who thinks Introduction to Evolutionary Informatics should be on your summer reading list? at Uncommon Descent, there is a list of endorsements – and I have to wonder if everyone who endorsed the book actually read it. “Rigorous and humorous”? Really?

Dembski, Marks, and Ewert will never explain how their work applies to models of evolution. But why not create a list of things which are problematic (or at least strange) with the book itself? Here is a start (partly copied from UD):


Two planets with life are more miraculous than one

The Sensuous Curmudgeon, who presently cannot post to his weblog, comments:

This Discoveroid article is amazing. Could Atheism Survive the Discovery of Extraterrestrial Life?. I wish I could make a new post about it. They say that if life is found elsewhere, that too is a miracle, so then you gotta believe in the intelligent designer. They say:

“The probability of life spontaneously self-assembling anywhere in this universe is mind-staggeringly unlikely; essentially zero. If you are so unquestioningly naïve as to believe we just got incredibly lucky, then bless your soul.”

Actually, “they” who posted at Evolution News and Views is someone we all love dearly, and see occasionally in the Zone — that master of arguments from improbability, Kirk Durston.

Evolution and Functional Information

Here, one of my brilliant MD PhD students and I study one of the “information” arguments against evolution. What do you think of our study?

I recently put this preprint on bioRxiv. To be clear, this study is not yet peer-reviewed, and I do not want anyone to miss this point. This is an “experiment” too. I’m curious to see if these types of studies are publishable. If they are, you might see more from me. Currently it is under review at a very good journal. So it might actually turn the corner and get out there. And a parallel question: do you think this type of work should be published?

I’m curious what the community thinks. I hope it is clear enough for non-experts to follow too. We went to great lengths to make the source code for the simulations available in an easy-to-read and annotated format. My hope is that a college-level student could follow the details. And even if you can’t, you can weigh in on whether the scientific community should publish this type of work.

Functional Information and Evolution

http://www.biorxiv.org/content/early/2017/03/06/114132

“Functional Information”, estimated from the mutual information of protein sequence alignments, has been proposed as a reliable way of estimating the number of proteins with a specified function and the consequent difficulty of evolving a new function. The fantastic rarity of functional proteins computed by this approach emboldens some to argue that evolution is impossible. Random searches, it seems, would have no hope of finding new functions. Here, we use simulations to demonstrate that sequence alignments are a poor estimate of functional information. The mutual information of sequence alignments fantastically underestimates the true number of functional proteins. In addition to functional constraints, mutual information is also strongly influenced by a family’s history, mutational bias, and selection. Regardless, even if functional information could be reliably calculated, it tells us nothing about the difficulty of evolving new functions, because it does not estimate the distance between a new function and existing functions. Moreover, the pervasive observation of multifunctional proteins suggests that functions are actually very close to one another and abundant. Multifunctional proteins would be impossible if the FI argument against evolution were true.
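For readers who have not met the quantity before, here is a small sketch of functional information in the Hazen and Szostak sense: minus the base-2 logarithm of the fraction of all sequences that meet a given functional threshold. The counts below are hypothetical toy numbers, and the function name is mine. This is the definition only, not the alignment-based estimate that the preprint examines.

```python
import math

def functional_information(n_functional, n_total):
    """FI in bits: -log2 of the fraction of sequences meeting the functional threshold."""
    return -math.log2(n_functional / n_total)

# Hypothetical toy case: 4 functional sequences among the 4**5 = 1024 possible DNA 5-mers.
fi = functional_information(4, 4 ** 5)
print(fi)  # 8.0

# If 16 times as many sequences are functional, FI drops by log2(16) = 4 bits.
print(functional_information(64, 4 ** 5))  # 4.0
```

This is why underestimating the number of functional sequences matters: every factor of two missed in the count inflates the functional information by one bit.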

Dice Entropy – A Programming Challenge

Given the importance of information theory to some intelligent design arguments I thought it might be nice to have a toolkit of some basic functions related to the sorts of calculations associated with information theory, regardless of which side of the debate one is on.

What would those functions consist of?
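As one possible first entry for such a toolkit, here is a sketch of Shannon entropy for a die, computed either from a stated probability distribution or from a list of observed rolls. The function names are my own suggestion, not an agreed interface.

```python
import math
from collections import Counter

def shannon_entropy(probs):
    """Entropy in bits of a discrete distribution; zero-probability outcomes contribute nothing."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def empirical_entropy(rolls):
    """Entropy in bits of the observed frequencies in a sequence of rolls."""
    counts = Counter(rolls)
    n = len(rolls)
    return shannon_entropy(c / n for c in counts.values())

fair_die = [1 / 6] * 6
loaded_die = [0.5, 0.1, 0.1, 0.1, 0.1, 0.1]  # hypothetical loading

print(round(shannon_entropy(fair_die), 3))    # 2.585
print(round(shannon_entropy(loaded_die), 3))  # 2.161
print(empirical_entropy([1, 1, 2, 2]))        # 1.0
```

A fair six-sided die gives log2(6), about 2.585 bits per roll, and any loading lowers that. Natural next functions would be joint entropy, conditional entropy, and mutual information built on the same pattern.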