Subjects: Evolutionary computation. Information technology–Mathematics.

In “Evo-Info 4: Non-Conservation of Algorithmic Specified Complexity,” I neglected to explain that algorithmic mutual information is essentially a special case of algorithmic specified complexity. This leads immediately to two important points:

- Marks et al. claim that algorithmic specified complexity is a measure of meaning. If this is so, then algorithmic mutual information is also a measure of meaning. Yet no one working in the field of information theory has ever regarded it as such. Thus Marks et al. bear the burden of explaining how they have gotten the interpretation of algorithmic mutual information right, and how everyone else has gotten it wrong.

- It should not come as a shock that the “law of information conservation (nongrowth)” for algorithmic mutual information, a special case of algorithmic specified complexity, does not hold for algorithmic specified complexity in general.

My formal demonstration of unbounded growth of algorithmic specified complexity (ASC) in data processing also serves to counter the notion that ASC is a measure of meaning. I did not explain this in Evo-Info 4, and will do so here, suppressing as much mathematical detail as I can. You need to know that a binary string is a finite sequence of 0s and 1s, and that the empty (length-zero) string is denoted $\lambda$. The particular data processing that I considered was erasure: on input of any binary string $x$, the output $\mathrm{erased}(x)$ is the empty string. I chose erasure because it rather obviously does not make data more meaningful. However, part of the definition of ASC is an assignment of probabilities to all binary strings. The ASC of a binary string is infinite if and only if its probability is zero. If the empty string is assigned probability zero, and all other binary strings are assigned probabilities greater than zero, then the erasure of a nonempty binary string results in an infinite increase in ASC. In simplified notation, the growth in ASC is

\[ A(\mathrm{erased}(x)) - A(x) = \infty \]

for all nonempty binary strings $x$. Thus Marks et al. are telling us that erasure of data can produce an infinite increase in meaning.
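The erasure argument can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration, not the construction used in Evo-Info 4: the distribution `neg_log2_prob` (which gives the empty string probability zero and every string of length n ≥ 1 probability 4^-n) is hypothetical, and `k_proxy` is a crude zlib-based stand-in for the uncomputable conditional complexity K(x|c). The only property of the stand-in that matters here is that it is finite.

```python
import math
import zlib


def neg_log2_prob(x: str) -> float:
    """Return -log2 P(x) for a hypothetical distribution P with P("") = 0.

    P(x) = 4 ** -len(x) for nonempty x. These probabilities sum to 1,
    because there are 2 ** n binary strings of each length n >= 1.
    """
    if x == "":
        return math.inf  # probability zero gives infinite surprisal
    return 2.0 * len(x)


def k_proxy(x: str, context: str) -> int:
    """Finite stand-in (in bits) for K(x | context); not a rigorous bound."""
    with_x = len(zlib.compress((context + x).encode()))
    alone = len(zlib.compress(context.encode()))
    return 8 * max(with_x - alone, 1)


def asc(x: str, context: str) -> float:
    """ASC of x in the given context under the hypothetical P above."""
    return neg_log2_prob(x) - k_proxy(x, context)


def erased(x: str) -> str:
    """Erasure: every input is mapped to the empty string."""
    return ""


if __name__ == "__main__":
    c = "some context"
    for x in ["0", "0110", "1" * 40]:
        growth = asc(erased(x), c) - asc(x, c)
        print(repr(x), "ASC:", asc(x, c), "growth under erasure:", growth)
```

For every nonempty input the reported growth is `inf`, whatever finite value the stand-in for K takes, because the infinity comes entirely from the zero probability assigned to the empty string.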

In Evo-Info 4, I observed that Marks et al. had as “their one and only theorem for algorithmic specified complexity, ‘The probability of obtaining an object exhibiting $\alpha$ bits of ASC is less then [sic] or equal to $2^{-\alpha}$’” and showed that it was a special case of a more-general result published in 1995. George Montañez has since dubbed this inequality “conservation of information.” I implore you to understand that it has nothing to do with the “law of information conservation (nongrowth)” that I have addressed.

Now I turn to a technical point. In Evo-Info 4, I derived some theorems, but did not lay them out in “Theorem … Proof …” format. I was trying to make the presentation of formal results somewhat less forbidding. That evidently was a mistake on my part. Some readers have not understood that I gave a rigorous proof that ASC is not conserved in the sense that algorithmic mutual information is conserved. What I had to show was the negation of the following proposition:

(False) \[ A(f(x); c, P) - A(x; c, P) \leq K(f) + O(1) \]

for all binary strings $x$ and $c$, for all probability distributions $P$ over the binary strings, and for all computable [ETA 12/12/2019: total] functions $f$ on the binary strings. The variables in the proposition are universally quantified, so it takes only a counterexample to prove the negated proposition. I derived a result stronger than required:

Theorem 1. There exist computable function $f$ and probability distribution $P$ over the binary strings such that

\[ A(f(x); c, P) - A(x; c, P) = \infty \]

for all binary strings $c$ and for all nonempty binary strings $x$.

Proof. The proof idea is given above. See Evo-Info 4 for a rigorous argument.

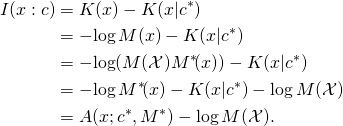

In the appendix, I formalize and justify the claim that algorithmic mutual information is essentially a special case of algorithmic specified complexity. In this special case, the gain in algorithmic specified complexity resulting from a computable transformation $f(x)$ of the data $x$ is at most $K(f) + O(1)$, i.e., the length of the shortest program implementing the transformation, plus an additive constant.

Appendix

Some of the following is copied from Evo-Info 4. The definitions of algorithmic specified complexity (ASC) and algorithmic mutual information (AMI) are:

\begin{align*} A(x; c, P) &:= -\log P(x) - K(x | c) \\ I(x : c) &:= K(x) - K(x | c^*), \end{align*}

where $P$ is a distribution of probability over the set $\mathcal{X}$ of binary strings, $x$ and $c$ are binary strings, and binary string $c^*$ is a shortest program, for the universal prefix machine in terms of which the algorithmic complexity $K(\cdot)$ and the conditional algorithmic complexity $K(\cdot | \cdot)$ are defined, outputting $c$. The base of logarithms is 2. The “law of conservation (nongrowth)” of algorithmic mutual information is:

(1) \[ I(f(x) : c) - I(x : c) \leq K(f) + O(1) \]

for all binary strings $x$ and $c$, and for all computable [ETA 12/12/2019: total] functions $f$ on the binary strings. As shown in Evo-Info 4,

\[ I(x : c) = -\log M(x) - K(x | c^*), \]

where the universal semimeasure $M$ on $\mathcal{X}$ is defined $M(x) := 2^{-K(x)}$ for all binary strings $x$. Here we make use of the normalized universal semimeasure

\[ M^*(x) := \frac{M(x)}{M(\mathcal{X})}, \]

where $M(\mathcal{X}) := \sum_{x \in \mathcal{X}} M(x)$. Now the algorithmic mutual information of binary strings $x$ and $c$ differs by an additive constant, $\log M(\mathcal{X})$, from the algorithmic specified complexity of $x$ in the context of $c^*$ with probability distribution $M^*$.

Theorem 2. Let $x$ and $c$ be binary strings. It holds that

\[ A(x; c^*, M^*) = I(x : c) + \log M(\mathcal{X}). \]

Proof. By the foregoing definitions,

\begin{align*} A(x; c^*, M^*) &= -\log M^*(x) - K(x | c^*) \\ &= -\log M(x) + \log M(\mathcal{X}) - K(x | c^*) \\ &= K(x) - K(x | c^*) + \log M(\mathcal{X}) \\ &= I(x : c) + \log M(\mathcal{X}). \end{align*}

Theorem 3. Let $x$ and $c$ be binary strings, and let $f$ be a computable [and total] function on $\mathcal{X}$. Then

\[ A(f(x); c^*, M^*) - A(x; c^*, M^*) \leq K(f) + O(1). \]

Proof. By Theorem 2,

\begin{align*} &A(f(x); c^*, M^*) - A(x; c^*, M^*) \\ &\quad = [I(f(x) : c) + \log M(\mathcal{X})] - [I(x : c) + \log M(\mathcal{X})] \\ &\quad = I(f(x) : c) - I(x : c) \\ &\quad \leq K(f) + O(1). \end{align*}

The inequality in the last step follows from (1).
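The cancellation of the additive constant can be checked numerically on a toy example. The Python sketch below is only an illustration under loud assumptions: the tables `toy_K` and `toy_K_given_c` are made-up stand-ins for K(x) and K(x|c*) on a four-string universe (true algorithmic complexity is uncomputable), and the normalized semimeasure is assumed to be the universal semimeasure divided by its total mass.

```python
import math

# Hypothetical complexities in bits, standing in for K(x) and K(x | c*).
toy_K = {"0": 2, "1": 3, "00": 4, "01": 4}
toy_K_given_c = {"0": 1, "1": 3, "00": 2, "01": 4}

# Semimeasure M(x) = 2^-K(x) and its total mass over the toy universe.
M = {x: 2.0 ** -k for x, k in toy_K.items()}
total_mass = sum(M.values())

# Normalized semimeasure M*(x) = M(x) / M(universe).
M_star = {x: m / total_mass for x, m in M.items()}


def ami(x: str) -> float:
    """Toy algorithmic mutual information: K(x) - K(x | c*)."""
    return toy_K[x] - toy_K_given_c[x]


def asc(x: str) -> float:
    """Toy ASC of x in context c* with distribution M*."""
    return -math.log2(M_star[x]) - toy_K_given_c[x]


# The offset between ASC and AMI is the same for every string.
offsets = {x: asc(x) - ami(x) for x in toy_K}

if __name__ == "__main__":
    print("log2 of total mass:", math.log2(total_mass))
    for x, offset in offsets.items():
        print(repr(x), "ASC - AMI =", offset)
    # Because the offset is constant, gains in ASC equal gains in AMI.
    print(asc("00") - asc("0"), ami("00") - ami("0"))
```

Since the offset does not depend on the string, the difference in ASC between any two strings equals the difference in AMI between them, which is the step the proof of Theorem 3 relies on.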

The Series

Evo-Info review: Do not buy the book until…

Evo-Info 1: Engineering analysis construed as metaphysics

Evo-Info 2: Teaser for algorithmic specified complexity

Evo-Info sidebar: Conservation of performance in search

Evo-Info 3: Evolution is not search

Evo-Info 4: Non-conservation of algorithmic specified complexity

Evo-Info 4 addendum

BruceS,

The idea is that if I want to find your telephone number through an algorithm and not spend the rest of my life searching for it I need the telephone number available to the algorithmic search process. This is a linear 10 digit sequence of digits 0 through 9. This sequence is short but has 10 billion possible ways to arrange it.

Finding a functional AA sequence faces a similar problem. If in the case of the adaptive immune system I have an invading microbe I can use the information from the microbe in order to algorithmically generate an antibody that can bind to it and kill it.

The ID argument is that de novo information is the unique product of a mind. Evolutionary process can modify information and fix those changes in a population but what accounts for de novo information such as a new unique gene sequence?

Seems to be the usual concerns with evolution and info “needed” for (eg) GA searching or no free lunch results. I have nothing new to add to what has been said on these topics.

These ID discussions often stalemate at those concerns and related issues with the physical reasonableness of proposed probability distributions.

It always reduces to those basic disagreements.

And speaking of reducing, old math joke:

Given an empty pot, stove, and water tap, how does a mathematician boil water? Fill the pot from the tap, put it on the stove, turn on the stove, wait for boiling.

Now given a pot full of water, stove, and tap, how does that mathematician boil water?

Dump the water out of the pot and it reduces to the first case.

and

You appear to be missing that changes in allele frequency may also involve increases in information. Imagine a population of bacteria, half of which carry an allele R that confers resistance to ampicillin, and half of which carry the sensitive allele S. Now suppose that I plate this population on a petri dish containing ampicillin. After a day, all bacteria with S have died, and the plate will be filled only with colonies of bacteria carrying R. Hence, information about the environment was transferred to the genome of the population while it adapted. This can also be expressed in terms of information theory, like Joe did.

Joe Felsenstein, Tom English, BruceS,

Thanks for all the explanations. It is appreciated, and I suspect not just by me.

Corneel,

So far this has only been asserted. How did you measure that information increased?

Well, you have to infer what ![]() is from the derivation. Nemati and Holloway use ![]() both as a set and a random variable (neither defined), and I don’t see that as fine. (I suspect that the lack of explicit definition of the random variable is what tripped up Holloway in (45). He seems to believe that any random variable will do. The derivation requires that the distribution of the random variable be p.) The derivation is otherwise correct, but needlessly weak. I’ll be replacing the inequality with an equality.

Please carry this over to a comment on my next OP.

The results that Eric says apply — (16) and the theorem by Ewert et al. mentioned in my quote of Evo-Info 4, above — in fact apply only if L has the distribution p that is specified as an argument in ASC(L, C, p). As it happens, Ewert et al. also do not indicate in their theorem that X is a random variable — they’ve previously said that it was an event or an object — but you can infer from their proof that X must be a random variable with distribution p of probability over the binary strings. So, again, I think that Eric believes that the results apply more generally than they actually do because they were stated execrably in the first place.

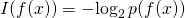

Well, I’ll say that derivation (51) in Section 4.3 is ridiculously overcomplicated. Given that

(43) \[ f\!ASC(x, C, p, f) < I(x) \]

for each and every f (and for each and every x), it is almost trivial that

(51) \[ E_F[f\!ASC(x, C, p, F)] < I(x). \]

As for Section 4.4, it is complicated. The equalities involving both fASC and ASC, i.e.,(53) and (54), are naked assertions. There is no semblance of proof. But I won’t be going into detail. There’s more than enough in the preceding sections. Life is too short. And, honestly, the work of George Montañez is much more interesting. George is a worthy adversary. Thing is, Eric Holloway comes out to play in cyberspace, and thus gets all the attention. I hope, perhaps unrealistically, to get some people, including you, to genuinely understand — not just take it on my word — that Eric is oblivious to crucial mathematical details, and then to be done with him. [ETA: I mean that I’ll be done with him. You can go on playing with him as long as you like.]

The work is of much higher quality than that of Section 4. It is related to Nemati’s thesis, and may even come from his thesis (which, like Holloway’s dissertation, is embargoed). There are some errors, but none that are terribly embarrassing. A problem for me, in writing an OP in response to the article, is that I don’t want to lump Nemati with Holloway. I have never painted with a broad brush. It appears that Nemati is as competent as one would expect a master’s level ECE to be.

Yeah, they can’t tell you much about the distribution of the statistic. But the derivation of expected ASC (not OASC) for a special case is a nice idea, and is fairly well done. It’s much, much better work than anything in Section 4. If you look closely, however, you’ll see that Nemati (I’m sure it’s his work) loses the context C along the way, and ends up working with K(x) in the derivation. He mentions nowhere that the C is the million-bit sequence of bits obtained by sampling the true distribution. That’s actually quite important to know, if you’re going to understand his results. The compressor is “trained” on the million-bit sample C, and then compresses x. That’s an upper bound on K(x|C). [ETA: I mean that the length of compressed-x is an upper bound on K(x|C).] So, loosely, the “background knowledge” here is a sample of the true distribution. Finding some simple case for which you can obtain expected ASC analytically, and then comparing mean observed ASC to (analytic) expected ASC is a nice way to proceed. I actually respect that approach.

Now, the truly sad thing about Eric’s false claim in (45),

with L generally not distributed according to p, is that Table 2, “Expected ASC of bitstrings generated from q distribution, measured using p distribution as chance hypothesis,” shows that expected ASC is positive when the true distribution q diverges from the hypothesized distribution p. What I’m saying about (45) is, loosely, that the distribution of ![]() is q, not the distribution p of ![](). I cannot see how Holloway, if he’d had any understanding at all of Nemati’s analytic results, would have written the inequality above, in contradiction of those results. And I wonder why Nemati said nothing about the contradiction.

You’re making a mistake in thinking Bill said something meaningful. Trying to understand what he said is a waste of time, he doesn’t even know himself. It’s just blather he spews out to give the appearance of having something to add.

That’s the assertion, not the argument. There is no argument that actually shows this.

Functional protein sequences can evolve from non-coding DNA. Basically by chance. This has been demonstrated in experiments.

You’ve had your attention directed to this paper multiple times:

Knopp M, Gudmundsdottir JS, Nilsson T, König F, Warsi O, Rajer F, Ädelroth P, Andersson DI. De Novo Emergence of Peptides That Confer Antibiotic resistance. MBio. 2019 Jun 4;10(3). pii: e00837-19. [doi: 10.1128/mBio.00837-19]

See my long response to BruceS, a few comments up the page.

One could argue with that, and say that C is a decompressor tuned to the million-bit sample of the true distribution. But I think (not carefully enough) that there’s an O(1) difference in the two approaches.

Tom English,

The expected result from you here is different than Eric’s. This has substance and would be interesting to resolve if he returns. There appears to be some uncertainty in his paper based on his test.

Thanks for taking the time to post this very helpful explanation.

If you ever wanted to spend the time on it, your analysis of that Montañez work would be welcomed.

As I am sure you have noticed, I’ll take any opportunity to shoot my mouth off about something that I think I might know something about.

I hardly ever make errors like that. I’m actually very good at keeping symbols straight. (It’s a carryover from programming, which I do much better than math.) In this case, I got cute, and attempted a copy-once-and-paste-thrice shortcut. I was working in a restaurant. Evidently something distracted me before I changed the little x’s to big X’s.

The embarrassing part is that I didn’t proofread the math carefully before letting the post go. It was quite a bit of work, and I confess that in the end, I just wanted to be done with it.

Not to make an excuse, I have to say that something quite different is going on when the errors slip past an editor with a Harvard Ph.D. in math, as well as two or three reviewers.

I started a post long ago, but never finished it. I think Joe is interested now. He’s a first-rate statistician, in addition to being a world-class theoretical biologist. I hope he will write something in response to Montañez, with or without me as coauthor. Right now, we’ve got a more-important project that I have delayed greatly. We’ll return to it, once we’ve had our say about Holloway.

Joe’s post is up at PT now. By the way, we’re not coordinating our efforts. We independently got fed up with Holloway.

So you get that point! I’m pleasantly surprised. I won’t give up on you, just yet. 😉

Well, we do discuss these matters, back-channel, and in somewhat more pungent terms than here. Tom has been helpful in critiquing drafts of my postings.

Right now he is trying to help me as I struggle to get the Pooh image that heads up my article at PT to be some reasonable size, such as its original 254×391 pixels. It seems instead to expand to fill all available space. I have gone on the web and found lots of advice involving HTML IMG tags and/or Github Markdown. None has worked. Tom sent a very sophisticated rewrite of my IMG tag. Alas, that did not work either.

Actually Montañez’s paper is generally OK. He makes it sound like it is all about information but it is really an application of Neyman/Pearson testing theory. He has a null hypothesis and then takes the specification function and divides it by the sum of its values at all points in the space, so as to make it behave like an alternative distribution. Then he applies NP’s testing machinery to define a rejection region for the null hypothesis, one which has values of the specification function that are as high as possible, so as to maximize power. Except that since the alternative distribution is not really a distribution, one can’t talk about power.

The -log2 choice then gives a result 2^(-alpha), but that is not as mystical as it seems, as all the 2-to-the-minus stuff comes from the arbitrary choice of taking a log to the base 2. One need not take any log, in which case he is just showing that the probability of being in the rejection region is (duh) alpha, since the region was chosen to achieve that, because that’s what NP understandably wants to achieve.

Joe Felsenstein,

Thanks for the post. Let me put down some information that may indicate a high level of specified complexity.

Alpha Actin 377 AA length

Alignment humans mice rabbits. 100%

Data like this indicates a high level of functional specificity in my opinion.

The possibility of these alignments is not much related to functional specificity, as many people have argued in the Gpuccio threads here. They are right. I am not going to go into this as it has nothing to do with the present discussion.

Joe Felsenstein,

Are you claiming that there is low specificity? This contradicts your claim. The prior arguments are not valid here. This is a universal skeletal protein it is not an enzyme.

Yeah, but we decided independently to do posts. There’s no “you hit him here, I’ll hit him there” strategy. I’d never think to make the points that you do in your post. I’m a computer scientist responding to EricMH as an electrical and computer engineer.

This ^^^^^ Sometimes I think he types just because he likes the clickity-clickity sound the keys make.

In this case it’s Tom trying to straighten out EricMH on conservation of ASC, while I say “Well, in any case, even if it is conserved, that conservation means nothing as to whether evolution can improve fitness”. Which makes the other issue moot as ASC doesn’t help understand whether there are constraints on evolution.

I did not, though if you insist I can probably put some numbers on that. But really this is just a toy example to help one understand how an external selection pressure can increase the proportion of genomes that match the specification “has high fitness”. In colloquial terms, information about the environment “there is ampicillin present” gets transferred to the genomes of the population “The R allele is fixed”.

This is the solution to Eric’s puzzle: where does all this information come from? It is already out there, and gets committed to the genome by feedback through differential survival and reproduction.

I suggest we leave Eric’s military grade out of the discussion. He has not claimed to speak for the military nor has he claimed that his membership provides him particular expertise here. I happen to have a somewhat similar military background and I do not share his views.

Corneel,

Eric’s puzzle is to algorithmically generate linear functional information. I suggested something as vague as “fitness” as a target will not yield new information.

If you can build an algorithm without a linear sequence as a target you go a long way to really falsifying Dembski’s concept of no free lunch for specified information.

Several sequences that have “survived” over long periods of time exist in the population of several different species. I shared with Joe that the same sequence of alpha actin, a skeletal muscle protein, has stayed intact for 80 million years assuming common descent among mammals is true. 377 amino acids and not one has mutated.

A simple program. Build a computerized algorithm that generates the sequence of A Actin with “fitness” as the target. You can start with only 2 amino acids available for every position either hydrophobic or hydrophilic based on the A Actin sequence. This will demonstrate 377 bits of FI generated algorithmically with “fitness” as the target.

… says the guy who claims “mind” creates proteins.

Dr. English, you do engage in a lot of mathematical misdirection, so if you are really serious in your criticism you need to submit a formal writeup to Bio Complexity. Otherwise, leaving it as these blog posts mean you can escape accountability for misrepresenting what is going on. Which is convenient, of course, and you can spew all sorts of insults at people such as myself, as well as preach to the choir. But beyond that, you cannot really achieve anything substantial with your criticism. To do that, you need to submit a scholarly criticism to Bio Complexity, which they will certainly print if it is valid.

Dr. Felsenstein, the same goes for your criticisms. I posted a brief comment over at PT to give you some leads, but there is already a lot of work that has been done by Dembski et al. that you are more than welcome to respond to with a scholarly article.

I’ll also add this is not an onerous burden. Both of you have already written up your criticisms extensively. From there, it’ll take you about the same amount time as writing yet another blog post to consolidate your writing and submit it to Bio-C. So, whether you choose to follow through with a real criticism indicates just how seriously you regard your own work. If you don’t follow through, this is a clear indicator that you know your criticisms are without substance in the eyes of the experts in the matter.

Anyways, as you two have decided to stop criticizing my work, and I don’t see anything new here, this will be my last comment on the subject until I see a new substantial criticism.

I suggest we leave titles out of the discussion, just as you do here.

My references, in one and only one post, to “Captain Holloway” were a bit of private humor. I’d say that Holloway is a major, were it not that he’s only a minor pain.

Actually, my joke is multifaceted. I’m not going to try to explain everything about it. But I’ll mention that conservative Christians have precious little respect for the expertise of those who challenge their beliefs, and huge respect for anyone of their own kind who puts “Dr.” in front of his or her name, irrespective of whether the individual’s field of study is related to the topic under consideration. At the moment, I’m thinking particularly of Focus on the Family’s “The Truth Project,” a 13-part video series on the Christian worldview. Each part is a staged classroom lecture of respectful, Bible-believing students by a great authority on an incredible range of topics, Dr. Del Tackett (never just Del Tackett). With some googling, I found that “Dr. Del Tackett” was sometimes “Del Tackett, D.M., Colorado Technical University.” Then, visiting the website for the university, I learned that “D.M.” was the abbreviation of the Doctor of Management degree.

I have never seen anyone shift gears more obviously, after earning a doctorate, than did Eric Holloway. He began spouting half-baked notions hither and yon in cyberspace, and seemed genuinely to believe that he was entitled to respect by his newly earned title. He evidently wants to be a “Dr. Del Tackett.”

Now, I happen to know that Captain Holloway’s status, in day-to-day military life, was virtually unchanged. (I come from an Air Force family. My summer jobs, as an undergrad, were on Air Force bases. I’ve spent lots and lots of time with Air Force officers, including two who were graduate students of mine. As a professor, I spent a summer working for the Army Corps of Engineers, under the Summer Faculty Research Program. Later, one of my research collaborators was a retired Army colonel who had earned his Ph.D., and had served as head of computer security for the National Security Agency, while on active duty.) I find the contrast of Eric’s identity in the real world and the identity he is trying to construct in cyberspace quite humorous.

Then you understand the contrast I’m talking about, whether or not you find it amusing.

I’ll throw in another remark on something that few people understand. Most of us who have earned a doctoral degree don’t make a big deal of it. We can remember when it was our objective. We can remember how hard we worked to achieve the objective. However, that seems rather strange, on reflection, because we know now that the doctorate was not the end, but instead just a beginning. Particularly, those of us who have taught in our disciplines recognize that we now understand the subject matter vastly better than we did on completion of our doctoral work. (And it’s rather funny to recall that the traditional approach to instruction in the military is “watch one, do one, teach one.”)

Yesterday, Tom made a prediction:

Today, Eric fulfilled that prediction:

And:

He concluded with one of his trademark flounces:

As I said over there, none of the Dembski arguments that you cite seem to have anything to do with the question I was raising there, how ASC can be used to place any constraint on evolution. As far as I can see, it can’t. I’ve asked for links or references there and will wait for that.

Above, I called your bluff that I had omitted essential context in your article, and pointed out that if it existed, you at least could tell the “general reader” (your term) where to find it. You vanished. Now you reappear with a new bluff, accusing me of “a lot of mathematical misdirection,” but cannot cite a single instance. You’re not dumb, but you certainly are a slow learner.

And lend my good name to an unreputable (and stunningly incestuous) journal created to disguise ID as science, after ID was judged not to be science in the Kitzmiller case? Somehow you have neglected to mention that the editor-in-chief of that journal, Robert J. Marks II, was your dissertation adviser (and Nemati’s thesis adviser). Over half of the articles published in the journal have Marks’s former advisees as authors.

Oh, so Bio-Complexity holds authors accountable, does it? Two of the past three articles it published, including yours, drew material from my post “Evo-Info 4: Non-Conservation of Algorithmic Specified Complexity,” but did not cite the source. That’s scholarly misconduct, Eric M. Holloway, CPT USAF, Ph.D. And I’m going to hazard a guess that the editor-in-chief of Bio-Complexity, humiliated by my post, will not allow references to it in the journal. If so, then he is also guilty of scholarly misconduct.

On this very page of comments, I have spoken well of your coauthor, David Nemati. I said outright that a problem for me, in responding to your article, is that I do not want to lump him with you. On this very page of comments, Joe Felsenstein and I both have spoken well of George Montañez.

Rolling on the floor, laughing my ass off.

Yet another atrocious bluff. On this very page of comments I posted detailed criticisms that I previously had not shared. As for my preaching to the choir, well, one of your fellow ID proponents saw that I had made a valid point, and indicated that you should respond:

Eric in case you haven’t noticed BIO-Complexity is not a scholarly journal. It’s a non peer-reviewed vanity publication put out by the DI specifically for publishing anti-evolution ID-Creationist horsecrap. BIO-Complexity is not recognized as a legitimate scientific journal by any scientific organization anywhere on the planet.

Before you start to squawk, having your fellow IDiots at the DI “review” the science-free garbage at B-C does NOT count as scientific peer review. If you had anything of substance to offer you’d submit it to a real science journal, not your little play journal. But you don’t have anything besides the usual Creationist bullshit and bluster.

Exactly right. Basener and Sanford scored when they managed to get bogus claims about Fisher’s Fundamental Theorem of Natural Selection through peer review at the Journal of Mathematical Biology. Looking at dates (Basener’s software, Sanford’s note in the 4th edition of Genetic Entropy that a paper was on the way), as well as the text, it appears that the paper was rejected by two or three journals, and accrued some revisions, before it was accepted by JMB. If Basener and Sanford had managed only a Bio-Complexity publication, nobody would have jumped for joy over the “accomplishment.”

Grownup creationists understand that Bio-Complexity is a journal of last resort.

In the beginning, Bio-Complexity did not have a peer-review process. Now it does. But consider the peers.

I agree that this is what Eric’s post lacks, as far as I can see.

I personally do not see much value in you continuing to post on Eric’s paper, unless you think you need to clarify your arguments as to why the claimed proofs in the paper fail.

I would be interested in what you think of the math and claims in his posts from late last year that he links at the PT discussion.

If there are any new mathematical ideas in the Montañez paper, even a short post pointing those out would be helpful. However, I suspect that I am the only one at TSZ (except possibly for Keith?) interested in the math for its own sake. So you may not want to bother for such a small potential audience.

I can definitely promise to “like” your post if that is any incentive.

I wonder if Bio-Complexity has ever rejected a paper by the people who publish there. Have Holloway, Marks, Dembski, Behe, Axe, Gauger, or any of the usual suspects, ever submitted a paper for review in Bio-Complexity, and been told their paper isn’t worthy of publication for some reason?

We already know that one of the ways the reviewing happens over there is that authors collaborate with “reviewers” to make the whole thing sound more rhetorically compelling to laypeople.

See my response to Eric at The Panda’s Thumb.

His “Mutual Algorithmic Information [sic], Information Non-growth, and Allele Frequency” is dense in errors. The evolutionary “algorithm” that he describes is not an algorithm: for all positive integers n, the probability that the procedure halts in n or fewer iterations is less than 1. Algorithmic complexity is defined in terms of halting programs for a deterministic universal computer U. Why does Eric think that a program running on a deterministic computer can randomly flip bits? If Eric wants to approximate the randomized procedure with a pseudo-random program, then he presumably wants to feed it a random seed (for the pseudo-random number generator) along with the random initial genome. But then the program does not necessarily halt, which is to say that it does not implement an algorithm. So how is it that Eric thinks he is measuring algorithmic mutual information? There’s more, and I’m desperately in need of sleep. But that’s enough for you to know that Eric, as in his Bio-Complexity article, is fabulously inattentive to crucial details.

Now for a high-level response… Eric’s post essentially dresses in a threadbare tuxedo the old misrepresentation of evolutionary theory as a claim that everything happens by chance. Eric takes “everything happens by chance” to mean that the universe is a universal computer, and is fed a randomly generated program. He then knocks down that straw-man claim — which, of course, no one has ever made. Please have another look at his post, ignoring as much of the stuff about algorithmic information as you can. You’ll see, toward the end, where he feeds the universal computer U a random program. Why would he do that? What is it supposed to model? He’s trying to establish that an evolutionary program requires an intelligent programmer.

This is old stuff, but it has never before been as daft as Eric makes it. Going back at least as far as No Free Lunch, Dembski said, “Oh, yeah! If the evolutionary search produces an outcome that is high in specified complexity, then where did the evolutionary search come from? Got you there, you sin-blinded evilutionists!” You perhaps are familiar with his search for a search regress. When Dembski, Ewert, and Marks talk about conservation of [active] information, that is an abbreviation of conservation of information in the search for a search. Loosely, the idea is that it takes at least as much information to find a good search as it takes to find the target that is searched for. Their main CoI theorem is actually just an obfuscated form of something embarrassingly elementary, Markov’s inequality. (Joe Felsenstein recognized this after Alan Fox made a comment that led me to explain active information differently than I ever had before. Sometimes good things happen in the Zone.) If you do not recall Markov’s inequality from school, then have a look at the Wikipedia article. The result is a simple consequence of the definition of the expected value of a random variable. I’ve long had a high-school level derivation written up (it runs something like five lines, for the discrete case). I’ll probably include it in Evo-Info 6.
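
For readers who do not want to look it up, here is a sketch of the standard textbook argument for the discrete case. It is not the write-up mentioned above. The symbols are assumptions introduced only for this illustration: X is a nonnegative discrete random variable with finite expected value, and a is any positive real number.

```latex
\begin{align*}
E[X] &= \sum_{x} x \, P(X = x)
        && \text{definition of expected value} \\
     &\ge \sum_{x \ge a} x \, P(X = x)
        && \text{dropped terms are nonnegative, since } X \ge 0 \\
     &\ge \sum_{x \ge a} a \, P(X = x)
        && \text{each remaining } x \text{ is at least } a \\
     &= a \, P(X \ge a)
        && \text{factor out } a \\
\text{hence}\quad P(X \ge a) &\le \frac{E[X]}{a}
        && \text{divide by } a > 0
\end{align*}
```

Nothing beyond the definition of expected value and the nonnegativity of X is used, which is why the result counts as elementary.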

The bit of making the universe out to be a computer running a program, and demanding that the naturalist account for the origin of the program, is something I first noticed in 2012 or so. I think it comes from reification of computational models of evolution. That’s something for you to think about. I can’t go into it now.

Sorry, but it will be a while before I get to the rest of your comment, as well as your comment in the other thread.

Thanks again, Tom.

Other than reading Joe’s and Shallit’s stuff, I have not spent much time with Dembski and CSI, mainly because the associated math is either something I already knew about or not as interesting to me as the computability and KC stuff.

I don’t know, of course, about rejections. But I’m pretty sure that someone made Nemati and Holloway do revisions. First, there’s the difference in acceptance and submission dates (for a paper that’s only ten pages long). Second, based on what Holloway was saying a couple months prior to the submission date, and on the opening of Section 4 (introduction and 4.1), I suspect that he originally argued simply that I was evaluating the component of ASC incorrectly, and that he did not define fASC. I hate saying anything good about the journal, but I honestly believe that someone made him introduce fASC. That would explain why you see in the text only references to ASC, while all of the derivations are for fASC. I think that Eric is refusing to admit that he didn’t get to publish, even in Bio-Complexity, what he “just knew” had to be true. So he’s now going around saying that he established conservation of ASC, even though he actually put a trivial upper bound on a very different quantity, fASC.

I didn’t know. Where did you learn that?

I think it was Ann Gauger who posted some of the feedback she got on one of her and Axe’s publications, over on peacefulscience. IIRC one of the reviewers was that fundamentalist Christian, pro-ID biochemist from Finland (Matti Leisola?). Even the small part of their exchange she revealed read like a propaganda collaboration, though I didn’t get the impression they thought so themselves. I’ll try and find it again.

Hey, come to think of it, that would explain the title of, and introduction to, George Montañez’s article, which don’t go with the rest of the paper. I’d thought that the shenanigans were more characteristic of Bob Marks than of George. And Marks in fact was listed as the editor of that particular article. (Of course, George should not have complied.)

So, thank you! I think I understand much better now what I’ve been looking at.

BruceS,

OK, I lied. But I’m really, really, very truly gone now.

Alright I found it, it’s in this post from Ann Gauger here: https://discourse.peacefulscience.org/t/gauger-answering-art-hunt-on-real-time-evolution/5313/49.

I can’t find where I got the impression that the reviewer was Matti Leisola from, I must have misremembered that part.

The view of the universe as a computer is described and critiqued here at SEP.

https://plato.stanford.edu/entries/computation-physicalsystems/#UniComSys

But even if one wants to promote the view that the universe computes, one has to formulate it in terms of quantum computation. This view often goes with the claim that the universe is fundamentally quantum information.

I don’t know of any philosopher of science who argues that some aspect of the universe computes the models of population genetics.

From what I have read of him, Eric has not explicitly made this claim about universal computing, but rather claimed that mathematics dictates the behavior of the universe. He appeals to Wigner’s puzzle of the unreasonable effectiveness of mathematics in physics.

But it is we who find the appropriate mathematical models by doing science to verify them. We test any proposed mathematics by embedding it in a mathematical, scientific model. You cannot ignore science and just take the mathematics you like and claim reality must follow it, especially where that mathematics makes conservation claims based on proofs which are controversial.

If existence is mathematical, then the math is too subtle for mere humans.

There are flaws and cracks, all the way down.

Or Tom may not regard the reviewers at Bio-C as having the needed expertise. There needs to be someone you both accept as an expert reviewer.

One candidate might be George Montañez.

But I think there is more work you could do to try to convince Tom. As I understand him, he claims that equation 45 is not proven because it uses the distribution p instead of a distribution which accounts for L. See here, esp. the image at the bottom of Tom’s post:

I have not seen a post where you address the content of Tom’s concern. To me, such a reply could consist in:

– Saying that you do not understand what Tom is claiming or how it applies and asking for further details

OR

– Saying you do understand Tom’s concern, explaining it in your own words so Tom can verify you understand what he means, and then showing how Tom’s criticism fails

BruceS,

If we could come to agreement on this simple point that would be progress.

Thanks, Rumraket.