Recent discussions of genetic algorithms here and Dave Thomas’ evisceration of Winston Ewert’s review of several genetic algorithms at The Panda’s Thumb prompted me to dust off my notes and ev implementation.

Introduction

In the spring of 1984, Thomas Schneider submitted his Ph.D. thesis demonstrating that the information content of DNA binding sites closely approximates the information required to identify the sites in the genome. In the week between submitting his thesis and defending it, he wrote a software simulation to confirm that the behavior he observed in biological organisms could arise from a subset of known evolutionary mechanisms. Specifically, starting from a completely random population, he used only point mutations and simple fitness-based selection to create the next generation.

The function of ev is to explain and model an observation about natural systems.

— Thomas D. Schneider

Even with this grossly simplified version of evolution, Schneider’s simulator, tersely named ev, demonstrated that the information content of a DNA binding site, $R_{sequence}$, consistently and relatively quickly evolves to be approximately equal to the information required to identify the binding sites in a given genome, $R_{frequency}$, just as is seen in the biological systems that were the subject of his thesis.

Schneider didn’t publish the details of ev until 2000, in response to creationist claims that evolution is incapable of generating information.

Core Concepts

Before discussing the implementation, it’s important to understand exactly what is being simulated. Dr. Schneider’s thesis is quite readable. The core concepts of ev are binding sites, $R_{sequence}$, and $R_{frequency}$.

Binding Sites

A binding site is a location on a strand of DNA or RNA where a protein can attach (bind). Binding sites consist of a sequence of nucleotides that together provide the necessary chemical bonds to hold the protein.

A good example of binding sites in action is the synthesis of messenger RNA (mRNA) by RNA polymerase (RNAP). RNAP binds to a set of a few tens of base pairs on a DNA strand, which triggers a series of chemical reactions that result in mRNA. This mRNA is then picked up by a ribosome (which also attaches to a binding site) that translates it into a protein.

The bases that make up a binding site are best described by a probability distribution; they are not a fixed set requiring an exact match.

$R_{frequency}$

$R_{frequency}$ is the simpler of the two information measures in ev. Basically, it is the number of bits required to find one binding site out of a set of binding sites in a genome of a certain length. For a genome of length $G$ with $\gamma$ binding sites, this is $R_{frequency} = \log_2(G/\gamma)$.

For example, consider a genome of 1000 base pairs containing 5 binding sites. The average distance between binding sites is 200 bases, so the information needed to find them is $\log_2 200$, which is approximately 7.64 bits.
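
The arithmetic is easy to check in Common Lisp. This is a minimal sketch; the function name r-frequency is my own label for illustration and is not taken from Schneider's code or from the GA engine.

```lisp
(defun r-frequency (genome-length site-count)
  "Bits needed to locate one of SITE-COUNT sites among GENOME-LENGTH positions."
  ;; Coerce to double-float first so the logarithm is computed in double precision.
  (log (/ (float genome-length 1d0) site-count) 2d0))

;; The worked example above: 1000 bases containing 5 sites.
(format t "~,2F bits~%" (r-frequency 1000 5))

;; The ev defaults: 256 positions containing 16 sites.
(format t "~,2F bits~%" (r-frequency 256 16))
```

The two calls should print roughly 7.64 and 4.00 bits, matching the figures used in this post.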

$R_{sequence}$

$R_{sequence}$ is the amount of information in the binding site itself. There are two problems with computing $R_{sequence}$. The first is the definition of “information.” Schneider uses Shannon information, a clearly defined, well-respected metric with demonstrated utility in the study of biological systems.

The second problem is that binding sites for the same protein don’t consist of exactly the same sequence of bases. Aligned sequences are frequently used to identify the bases that are most common at each location in the binding site, but they don’t tell the whole story. An aligned sequence that shows an A in the first position may reflect a set of actual sites of which 70% have A in the first position, 25% C, and 5% G. $R_{sequence}$ must take into account this distribution.

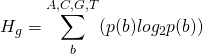

The Shannon uncertainty of a base in a binding site is:

(1) $H = -\sum_{b \in \{A, C, G, T\}} p(b) \log_2 p(b)$

where $p(b)$ is the probability of a base $b$ at that location in the genome. This will be approximately 0.25, equal probability for all bases, for the initial, randomly generated genome. The initial uncertainty at a binding site is therefore:

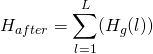

(2) $H_{before} = -L \sum_{b} 0.25 \log_2 0.25 = 2L$

where $L$ is the width of the site.

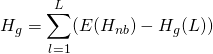

$R_{sequence}$, the increase in information, is then $H_{before} - H_{after}$, where:

(3) $H_{after} = -\sum_{l=1}^{L} \sum_{b} f(b, l) \log_2 f(b, l)$

computed from the observed probabilities $f(b, l)$ of base $b$ at position $l$ of the site.

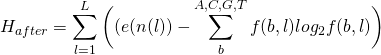

There is one additional complexity with these formulas. Because of the small sample size, an adjustment must be computed for ![]() :


(4)

or

(5)

measured in bits per site.

The math behind the small sample adjustment is explained in Appendix 1 of Schneider’s thesis. Approximate values for ![]() for binding site widths from 1 to 50 are available pre-computed by a program available on Schneider’s site:


For a random sequence, $R_{sequence}$ will be near 0. This will evolve to $R_{frequency}$ over an ev run.
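
To make the calculation concrete, here is a rough Common Lisp sketch that computes the information content of a set of aligned sites as the per-position drop from 2 bits. It deliberately omits the small sample adjustment, so it will overestimate the information for small sets of sites. The function names are hypothetical and the sites are assumed to be equal-length strings.

```lisp
(defun position-uncertainty (sites position)
  "Shannon uncertainty, in bits, of the bases observed at POSITION across SITES."
  (let ((counts (make-hash-table))
        (total (length sites))
        (uncertainty 0d0))
    ;; Tally how often each base occurs at this position.
    (dolist (site sites)
      (incf (gethash (char site position) counts 0)))
    ;; Accumulate -p * log2(p) over the bases that actually occur.
    (maphash (lambda (base count)
               (declare (ignore base))
               (let ((p (/ count (float total 1d0))))
                 (decf uncertainty (* p (log p 2d0)))))
             counts)
    uncertainty))

(defun r-sequence (sites)
  "Uncorrected information content, in bits, of equal-width aligned SITES."
  (loop for position below (length (first sites))
        sum (- 2d0 (position-uncertainty sites position))))
```

Identical sites give the maximum of 2 bits per position, while sites that use all four bases equally at every position give 0.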

Schneider’s ev Implementation

Schneider’s implementation is a fairly standard genetic algorithm, with an interesting fitness function. The virtual genomes contain, by default, 256 potential binding sites. The genomes are composed of characters from an alphabet of four letters (A, C, G, and T). The default number of optimal binding sites, $\gamma$, is 16. The locations of these sites are randomly generated at the beginning of each run and remain the same throughout the run. Given this configuration, $R_{frequency}$, the amount of information required to identify one of these sites in a genome of length $G = 256$, is $\log_2(256/16)$, which equals 4. Per Schneider’s Ph.D. thesis, $R_{sequence}$, the information in the binding site itself, should evolve to and remain at approximately this value during a run.

To determine the number of binding sites actually present, a portion of the genome codes for a recognizer as well as being part of the set of potential binding sites. This recognizer, which is subject to the same mutation and selection as the rest of the genome, is applied at each base to determine if that base is the start of a binding site. If the recognizer misses a base that is the start of a binding site, the fitness of the genome is decreased by one. If it flags a base that is not the start of a binding site, the fitness of the genome is also decreased by one. Schneider notes that changing this weighting may affect the rate at which $R_{sequence}$ converges to $R_{frequency}$ but not the final result.

After all genomes are evaluated, the half with the lowest fitness are eliminated and the remaining are duplicated with mutation. Schneider uses a relatively small population size of 64.
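
The selection step can be sketched in a few lines of Common Lisp. This is an illustration rather than Schneider's code: next-generation, fitness-fn, and mutate-fn are hypothetical names, higher fitness is assumed to be better, and the survivors are carried over unmutated while only their copies are mutated, which is an assumption on my part.

```lisp
(defun next-generation (population fitness-fn mutate-fn)
  "Keep the fitter half of POPULATION and add one mutated copy of each survivor."
  (let* ((ranked (sort (copy-list population) #'> :key fitness-fn))
         (survivors (subseq ranked 0 (floor (length ranked) 2))))
    (append survivors (mapcar mutate-fn survivors))))

;; Toy usage: integers stand in for genomes, the value is its own fitness,
;; and "mutation" simply adds one.
(print (next-generation '(5 1 4 2) #'identity #'1+))
```

With an even population size such as 64, the population size stays constant from one generation to the next.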

The recognizer is encoded as a weight matrix of $4 \times L$ two’s-complement integers, where $L$ is the length of a binding site (6 by default). Schneider notes that:

At first it may seem that this is insufficient to simulate the complex processes of transcription, translation, protein folding and DNA sequence recognition found in cells. However the success of the simulation, as shown below, demonstrates that the form of the genetic apparatus does not affect the computed information measures. For information theorists and physicists this emergent mesoscopic property will come as no surprise because information theory is extremely general and does not depend on the physical mechanism. It applies equally well to telephone conversations, telegraph signals, music and molecular biology.

Since ev genomes consist only of A, C, G, and T, these need to be translated to integers for the weight matrix. Schneider uses the straightforward mapping of (A, C, G, T) to (00, 01, 10, 11). The default number of bases for each integer is 5. Given these settings, AAAAA encodes the value 0, AAAAC encodes 1, and TTTTT encodes -1 (by two’s-complement rules).
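
The decoding rule is short enough to show directly. The following Common Lisp sketch applies the (A, C, G, T) to (00, 01, 10, 11) mapping and interprets the resulting bits as a two's-complement integer. The function names are hypothetical, and the input is assumed to be a non-empty string containing only the four base letters.

```lisp
(defun base-value (base)
  "Map a base character to its two-bit value: A=0, C=1, G=2, T=3."
  (ecase base
    (#\A 0)
    (#\C 1)
    (#\G 2)
    (#\T 3)))

(defun bases-to-integer (bases)
  "Decode the string BASES as a two's-complement integer, two bits per base."
  (let ((bit-count (* 2 (length bases)))
        (unsigned 0))
    ;; Build the unsigned value, most significant base first.
    (loop for base across bases
          do (setf unsigned (+ (* 4 unsigned) (base-value base))))
    ;; A set high bit marks a negative number in two's complement.
    (if (logbitp (1- bit-count) unsigned)
        (- unsigned (expt 2 bit-count))
        unsigned)))

(print (mapcar #'bases-to-integer '("AAAAA" "AAAAC" "TTTTT")))
```

For the three examples in the text this yields 0, 1, and -1. With 5 bases per integer the representable range is -512 to 511.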

When evaluating a genome, the first $4 \times 6 \times 5 = 120$ bases are read into the $4 \times 6$ weight matrix. The next 5 bases represent a threshold value that is used to determine whether or not the value returned by the recognizer is a binding site match. This is also a two’s-complement integer with the same mapping. The recognizer is then applied from the first base in the genome to the last that could possibly be the start of a binding site (given the binding site length).

It’s worth re-emphasizing that the recognizer and the threshold are part of the genome containing the binding sites. The length of the full genome is therefore $G + L - 1 = 261$ bases, with only the first $G = 256$ bases being potential binding sites.
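
As a worked check of the layout under the default settings, the arithmetic comes out as follows. The symbols $G$ and $L$ are the genome length and site width defined earlier; the 261-base total assumes that $L - 1$ extra bases are appended so that a full-width site can start at any of the 256 positions, and the bit count assumes two bits per base as in my own implementation.

```latex
\begin{align*}
\text{weight matrix} &= 4 \times 6 \text{ integers} \times 5 \text{ bases each} = 120 \text{ bases} \\
\text{threshold} &= 1 \text{ integer} \times 5 \text{ bases} = 5 \text{ bases} \\
\text{full genome} &= G + L - 1 = 256 + 6 - 1 = 261 \text{ bases} \\
\text{as a bit string} &= 2 \times 261 = 522 \text{ bits}
\end{align*}
```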

The recognizer calculates a total value for the potential site starting at a given point by summing the values of the matching bases in the weight matrix. The weight matrix contains a value for each base at each position in the site, so for a binding site length of 7 and a potential binding site consisting of the bases GATTACA, the total value is:

w[0]['G'] + w[1]['A'] + w[2]['T'] + w[3]['T'] + w[4]['A'] + w[5]['C'] + w[6]['A']

If this value exceeds the threshold, the recognizer identifies the bases as a binding site.
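
A small Common Lisp sketch of this scoring rule follows. The weight matrix is assumed to be a two-dimensional array indexed by site position and base, and consensus-weights is a hypothetical helper that builds a toy matrix for testing; in ev itself the weights are decoded from the genome.

```lisp
(defun base-index (base)
  "Column of the weight matrix for BASE."
  (ecase base
    (#\A 0)
    (#\C 1)
    (#\G 2)
    (#\T 3)))

(defun site-score (weights genome start)
  "Sum the weights selected by the bases of GENOME beginning at START."
  (loop for position below (array-dimension weights 0)
        sum (aref weights position
                  (base-index (char genome (+ start position))))))

(defun binding-site-p (weights threshold genome start)
  "True when the score at START exceeds THRESHOLD."
  (> (site-score weights genome start) threshold))

(defun consensus-weights (consensus)
  "Toy matrix: +1 for the consensus base at each position, -1 otherwise."
  (let ((weights (make-array (list (length consensus) 4) :initial-element -1)))
    (loop for position from 0
          for base across consensus
          do (setf (aref weights position (base-index base)) 1))
    weights))

(let ((weights (consensus-weights "GATTACA")))
  (print (site-score weights "GATTACA" 0))
  (print (binding-site-p weights 6 "GATTACA" 0)))
```

With this toy matrix an exact GATTACA match scores 7 and passes a threshold of 6, while a single mismatch drops the score to 5 and fails.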

This implementation of the recognizer is an interesting way of encapsulating the biological reality that binding sites don’t always consist of exactly the same sequence of bases. Schneider notes, though, that “the exact form of the recognition mechanism is immaterial because of the generality of information theory.”

Schneider’s Results

Using his default settings of:

- Genome length: $G = 256$

- Number of binding sites: $\gamma = 16$

- Binding site length: $L = 6$

- Bases per integer: 5

Schneider found that:

When the program starts, the genomes all contain random sequence, and the information content of the binding sites, $R_{sequence}$, is close to zero. Remarkably, the cyclic mutation and selection process leads to an organism that makes no mistakes in only 704 generations (Fig. 2a). Although the sites can contain a maximum of $2L = 12$ bits, the information content of the binding sites rises during this time until it oscillates around the predicted information content, $R_{frequency} = 4$ bits . . . .

The Creationist Response

Thirty years after the original implementation and sixteen years after it was published, Intelligent Design Creationists (IDCists) are still attempting to refute ev and are still getting it wrong.

Dembski In 2001

In 2001, William Dembski claimed that ev does not demonstrate an information increase and further claimed that Schneider “smuggled in” information via his rule for handling ties in fitness. Schneider reviewed and rebutted the first claim and tested Dembski’s second claim, conclusively demonstrating it to be false.

Schneider wryly addresses this in the ev FAQ:

Does the Special Rule smuggle information into the ev program?

This claim, by William Dembski, is answered in the on-line paper Effect of Ties on the evolution of Information by the ev program. Basically, changing the rule still gives an information gain, so Dembski’s prediction was wrong.

Has Dembski ever acknowledged this error?

Not to my knowledge.

Don’t scientists admit their errors?

Generally, yes, by publishing a retraction explaining what happened.

Montanez, Ewert, Dembski, and Marks In 2010

Montanez, Ewert, Dembski, and Marks published A Vivisection of the ev Computer Organism: Identifying Sources of Active Information in the IDCists’ pseudo-science journal BIO-Complexity in 2010. Despite its title, the paper doesn’t demonstrate any understanding of the ev algorithm or what it demonstrates:

- The authors spend a significant portion of the paper discussing the efficiency of the ev algorithm. This is a red herring — nature is profligate and no biologist, including Schneider, claims that evolutionary mechanisms are the most efficient way of achieving the results observed.

- Related to the efficiency misdirection, the authors suggest alternative algorithms that have no biological relevance instead of addressing the actual algorithm used by ev.

- The authors do not use Shannon information, instead substituting their idiosyncratic “active information”, including dependencies on Dembski’s concept of “Conservation of Information” which has been debunked by Wesley Elsberry and Jeffrey Shallit in Information Theory, Evolutionary Computation, and Dembski’s “Complex Specified Information”, among others.

- The authors note that “A common source of active information is a software oracle”. By recognizing that an oracle enables evolutionary mechanisms to work in software, they are admitting that those same mechanisms can explain what we observe in biological systems because the real world environment is just such an oracle. The environment provides information about what works and what doesn’t by ensuring that organisms less suited to it will tend to leave fewer descendants.

- The authors repeatedly claim that the “perceptron” used as a recognizer makes the ev algorithm more efficient. Their attempted explanation of why this is the case completely ignores the actual implementation of ev. They seem so caught up in Schneider’s description of the recognizer as a perceptron that they miss the fact that it’s nothing more than a weight matrix that models the biological reality that a binding site is not a fixed set of bases.

Basically the paper is a rehash of concepts the authors have discussed in previous papers combined with the hope that some of it will be applicable to ev. None of it is.

Schneider soundly refuted the paper in Dissection of “A Vivisection of the ev Computer Organism: Identifying Sources of Active Information”. He succinctly summarized the utter failure of the authors to address the most important feature of ev:

They do not compute the information in the binding sites. So they didn’t evaluate the relevant information ($R_{sequence}$) at all.

In a response to that refutation, Marks concedes that “Regardless, while we may have different preferred techniques for measuring information, we agree that the ev genome does in fact gain information.”

After that damning admission, Marks still doubles down on his spurious claim that the “Hamming oracle” makes ev more efficient:

Schneider addresses the hamming oracle issue by assuming that nature provides a correct fitness function (a hamming function) that allows for positive selection in the direction of our target. He argues that this fitness is based on a

biologically sensible criteria: having functional DNA binding sites and not having extra ones.

But this describes a target; this is the desired goal of the simulation. The fitness function actually being used is a distance to this target. This distance makes efficient information extraction possible.

That’s not a target. It provides no details about what a solution would look like or how to reduce the distance measured; it simply indicates how far away a genome is from being a solution. In fact, it does less than that because it doesn’t provide any information about the difference between an existing recognizer and an ideal recognizer. It also says nothing at all about the relationship between $R_{frequency}$ and $R_{sequence}$.

Even as he tries to salvage the tatters of his paper, Marks makes another concession:

Reaching that point requires a particular shape to the fitness landscape to guide evolution.

He admits again that evolution does work in certain environments. The real world is one of those.

Nothing in Marks’ response changes the accuracy of Schneider’s summary in his refutation:

Aside from their propensity to veer away from the actual biological situation, the main flaw in this paper is the apparent misunderstanding of what ev is doing, namely what information is being measured and that there are two different measures. The authors only worked with $R_{frequency}$ and ignored $R_{sequence}$. They apparently didn’t compute information from the sequences. But it is the increase of $R_{sequence}$ that is of primary importance to understand. Thanks to Chris Adami, we clearly understand that information gained in genomes reflects ‘information’ in the environment. I put environmental ‘information’ in quotes because it is not clear that information is meaningful when entirely outside the context of a living organism. An organism interprets its surroundings and that ‘information’ guides the evolution of its genome.

Ewert in 2014

Winston Ewert published Digital Irreducible Complexity: A Survey of Irreducible Complexity in Computer Simulations in 2014, again in the IDCists’ house journal BIO-Complexity. This paper constituted 25% of the output of that august publication in that year. Ewert reviewed Avida, ev, Dave Thomas’ Steiner Trees, a geometric algorithm by Suzanne Sadedin, and Adrian Thompson’s Digital Ears, attempting to demonstrate that none of them generated irreducible complexity.

Michael Behe defined “irreducible complexity” in his 1996 book Darwin’s Black Box:

By irreducibly complex I mean a single system composed of several well-matched, interacting parts that contribute to the basic function, wherein the removal of any one of the parts causes the system to effectively cease functioning. An irreducibly complex system cannot be produced directly (that is, by continuously improving the initial function, which continues to work by the same mechanism) by slight, successive modifications of a precursor system, because any precursor to an irreducibly complex system that is missing a part is by definition nonfunctional.

Dave Thomas has shredded Ewert’s discussion of Steiner Trees, demonstrating that Ewert addressed a much simpler problem (spanning trees) instead.

Richard B. Hoppe has similarly destroyed Ewert’s claims about Avida.

Schneider does explicitly claim that ev demonstrates the evolution of irreducible complexity:

The ev model can also be used to succinctly address two other creationist arguments. First, the recognizer gene and its binding sites co-evolve, so they become dependent on each other and destructive mutations in either immediately lead to elimination of the organism. This situation fits Behe’s definition of ‘irreducible complexity’ exactly . . . .

Ewert quotes this claim and attempts to refute it with:

It appears that Schneider has misunderstood the definition of irreducible complexity. Elimination of the organism would appear to refer to being killed by the model’s analogue to natural selection. Given destructive mutations, an organism will perform less well than its competitors and “die.” However, this is not what irreducible complexity is referring to by “effectively ceasing to function.” It is true that in death, an organism certainly ceases to function. However, Behe’s requirement is that:

If one removes a part of a clearly defined, irreducibly complex system, the system itself immediately and necessarily ceases to function.

The system must cease to function purely by virtue of the missing part, not by virtue of selection.

It appears that Ewert is the one with the misunderstanding here. If there is a destructive mutation in the genes that code for the recognizer, none of the binding sites will be recognized and, in the biological systems that ev models, the protein will not bind and the resulting capability will not be provided. It will “immediately and necessarily” cease to function. This makes the system irreducibly complex by Behe’s definition.

Binding sites are somewhat less brittle, simply because there are more of them. However, if there is a destructive mutation in one or more of the binding sites, the organism with that mutation will be less fit than others in the same population. In a real biological system, the function provided by the protein binding will be degraded at best and eliminated at worst. The organism will have effectively ceased to function. It is this lack of function that results in the genome being removed from the gene pool in the next generation.

Given that the recognizer and binding sites form a set of “well-matched, interacting parts that contribute to the basic function” and that “the removal of any one of the parts causes the system to effectively cease functioning”, ev meets Behe’s definition of an irreducibly complex system.

The IDCist Discovery Institute touted Ewert’s paper as evidence of “an Excellent Decade for Intelligent Design” in the ten years following the Dover trial. That case, of course, is the one that showed conclusively that ID is simply another variant of creationism and a transparent attempt to violate the separation of church and state in the United States. If Ewert’s paper is among the top achievements of the IDC movement in the past ten years then it’s clear that reality-based observers still have no reason to take any IDCist pretensions to scientific credibility seriously. The political threat posed by intelligent design and other variants of creationism is, unfortunately, still a significant problem.

An Alternative ev Implementation

I have implemented a variant of Dr. Schneider’s ev in order to reproduce his results and explore the impact of some alternative approaches. My version of ev uses the GA Engine I wrote to solve Dave Thomas’ Steiner Network design challenge. This engine operates on bit strings rather than the characters used by Dr. Schneider’s implementation.

As described in the GA engine documentation, applying the GA engine to a problem consists of following a few simple steps:

- Create a class to represent the characteristics of the problem

  The problem class ev-problem contains the parameters for genome length ($G$), number of binding sites ($\gamma$), binding site length ($L$), and bases per integer.

- Implement a method to create instances of the problem class

  The make-ev-problem function creates an instance of ev-problem.

- Implement the required generic functions for the problem:

  - genome-length

    The genome length is $2 \times (G + L - 1)$, using two bits to encode each base pair.

  - fitness

    The fitness of a genome is the number of mistakes made by the recognizer, the total of missed and spurious binding sites.

  - fitness-comparator

    This problem uses the greater-comparator provided by the GA engine.

- Implement a terminator function

  This problem uses the generation-terminator provided by the GA engine, stopping after a specified number of generations.

- Run solve

Initial Results

In my implementation, Schneider’s default settings and selection mechanism are configured like this:

    (defparameter *default-ev-problem*
      (make-ev-problem 256 16 6 5))

    (let* ((problem *default-ev-problem*)
           (gene-pool (solve problem 64 (generation-terminator 3000)
                             :selection-method :truncation-selection
                             :mutation-count 1
                             :mutate-parents t
                             :interim-result-writer #'ev-interim-result-writer))
           (best-genome (most-fit-genome gene-pool (fitness-comparator problem))))
      (format t "~%Best = ~F~%Average = ~F~%~%"
              (fitness problem best-genome)
              (average-fitness problem gene-pool)))

This creates an instance of the ev-problem with 256 potential binding sites, 16 actual binding sites, a binding site width of 6 bases, and 5 bases per integer. It then evolves a population of 64 genomes for 3000 generations using truncation selection (taking the top 50% of each gene pool to seed the next generation), changing one base in each genome, including the parent genomes, per generation.

The results are consistent with those reported by Schneider. Over ten runs, each with a different random seed, the population evolves to have at least one member with no mistakes within 533 to 2324 generations (the mean was 1243.6 with a standard deviation of 570.91). $R_{sequence}$ approaches $R_{frequency}$ throughout this time. As maximally fit genomes take over the population, $R_{sequence}$ oscillates around $R_{frequency}$.

While my implementation lacks the GUI provided by Schneider’s Java version, the $R_{sequence}$ values output by ev-interim-result-writer show a similar distribution.

Variations

There are many configuration dimensions that can be explored with ev. I tested a few, including the effect of population size, selection method, mutation rate, and some problem-specific parameters.

Population Size

Increasing the population size from 64 to 256 but leaving the rest of the settings the same reduces the number of generations required to produce a maximally fit genome to between 251 and 2255 with a mean of 962.23 and a standard deviation of 792.11. A population size of 1000 results in a range of 293 to 2247 generations with a lower mean (779.4) and standard deviation (689.68), at a higher computation cost.

Selection Method

Schneider’s ev implementation uses truncation selection, using the top 50% of a population to seed the next generation. Using tournament selection with a population of 250, a tournament size of 50, and a mutation rate of 0.5% results in a maximally fit individual arising within 311 to 4561 generations (with a mean of 2529.9 and standard deviation of 1509.01). Increasing the population size to 500 gives a range of 262 to 4143 with a mean of 1441.2 and standard deviation of 1091.95.

Adding crossover to tournament selection with the other parameters remaining the same does not seem to significantly change the convergence rate.

Changing the tournament selection to mutate the parents as well as the children of the next generation does, however, have a significant impact. Using the same population size of 500 and mutation rate of 0.5% but mutating the parents results in a maximally fit individual within 114 to 1455 generations, with a mean of 534.1 and a standard deviation of 412.01.

Roulette wheel selection took much longer to converge, probably due to the fact that a large percentage of random genomes have identical fitness because no binding sites, real or spurious, are matched. This makes the areas of the wheel nearly equal for all genomes in a population.

Mutation Rate

In the original ev, exactly one base of 261 in each genome is modified per generation. This explores the fitness space immediately adjacent to the genome and is essentially simple hill climbing. This mutation rate is approximately 0.2% when applied to a string of bases represented by two bits each.
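
A single-base mutation of this kind might look like the following in Common Lisp. This is a sketch working on a string of bases rather than on the bit strings my GA engine uses; mutate-one-base is a hypothetical name, and forcing the new base to differ from the old one is my assumption, not something the original description states.

```lisp
(defun mutate-one-base (genome &optional (random-state *random-state*))
  "Return a copy of GENOME, a string of A, C, G, and T, with one base changed."
  (let* ((copy (copy-seq genome))
         (position (random (length copy) random-state))
         ;; The three bases other than the one currently at POSITION.
         (choices (remove (char copy position) "ACGT")))
    (setf (char copy position)
          (char choices (random (length choices) random-state)))
    copy))

;; One change in a 261-base genome; in a two-bits-per-base encoding this
;; flips one or two of the 522 bits.
(let* ((genome (make-string 261 :initial-element #\A))
       (mutant (mutate-one-base genome)))
  (print (count nil (map 'list #'char= genome mutant))))
```

The final form counts the positions at which the mutant differs from the original, which is always 1 under the assumption above.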

Changing the mutation count to a mutation rate of 1% results in ev taking an order of magnitude more generations to produce a maximally fit individual. Rates of 0.5% and 0.2% result in convergence periods closer to those seen with a single mutation per genome, both with truncation and tournament selection, particularly with larger population sizes. As Schneider notes, this is only about ten times the mutation rate of HIV-1 reverse transcriptase.

Binding Site Overlap

By default, binding sites are selected to be separated by at least the binding site width. When this restriction is removed, surprisingly, the number of generations required to produce the first maximally fit genome does not change significantly from the non-overlapping case.

Alternative Implementation Results

Population size seems to have the largest impact on the number of generations required to reach equilibrium. Mutation rate has a smaller effect, but can prevent convergence when set too high. Tournament selection takes a bit longer to converge than truncation selection, but the two are within the same order of magnitude. Roulette selection does not work well for this problem.

Unlike the Steiner network and some other problems, crossover doesn’t increase the convergence rate. Mutating the parent genomes before adding them to the next generation’s gene pool does have a measurable impact.

Regardless of selection method, mutation rate, or other parameters, $R_{sequence}$ always evolves to and then oscillates around $R_{frequency}$.

Source Code

The code is available on GitHub. The required files are:

- ga-package.lisp

- ga.lisp

- examples/ga-ev-package.lisp

- examples/ga-ev.lisp

- examples/load-ev.lisp

To run from the command line, make the examples directory your working directory and then call

    ccl64 --load load-ev.lisp

if you’re using Clozure CL or

    sbcl --load load-ev.lisp

if you’re using Steel Bank Common Lisp.

If you need a refresher on Common Lisp programming, Peter Seibel’s Practical Common Lisp is an excellent book.

Summary

In addition to being a good test case for evolutionary algorithms, ev is interesting because of its biological relevance. As Schneider points out in his Results section:

Although the sites can contain a maximum of $2L = 12$ bits, the information content of the binding sites rises during this time until it oscillates around the predicted information content $R_{frequency} = 4$ bits, with $R_{sequence} = 3.983 \pm 0.399$ bits during the 1000 to 2000 generation interval.

With this, ev puts a spoke in the wheel of creationists who complain that GAs like Richard Dawkins’ weasel program only demonstrate “directed evolution”. There is nothing in the ev implementation that requires that $R_{sequence}$ evolve to $R_{frequency}$, yet it does every time.

It’s well worth running the Java version of ev to see the recognizer, threshold, and binding sites all co-evolving. This is a clear answer to creationist objections about how “irreducibly complex” parts could evolve.

The common creationist argument from incredulity based on the complexity of cell biochemistry is also touched on by ev. Even with a genome where the recognizer and binding sites all overlap indiscriminately, a maximally fit recognizer evolves in a tiny population within a small number of generations.

Despite numerous attempts, Intelligent Design Creationists haven’t been able to refute any of Dr. Schneider’s claims or the evidence provided by ev. His history of responses to creationists is both amusing and devastating to his critics.

Unlike his IDCist critics, Schneider uses a clear, unambiguous, measurable definition of information and demonstrates that even the simplest known evolutionary mechanisms can increase it. Shannon information is produced randomly in the context of the environment but is preserved non-randomly by selection. Differential reproductive success does, therefore, generate information. As Schneider succinctly puts it:

Replication, mutation and selection are necessary and sufficient for information gain to occur.

This process is called evolution.

— Thomas D. Schneider

Please contact me by email if you have any questions, comments, or suggestions.

I supported my claim, Richie. Just because you cannot understand what I posted doesn’t mean anything to me. The fact that you cannot say how they do it tells me you don’t know anything about it.

The process ID uses, which is the same one those other venues use, was laid out by Sir Isaac Newton in his four rules of scientific investigation. That means they all use the EF or something very, very similar to it. They have to eliminate necessity and chance and then find a pattern.

How compressible is “An endless, sinusoidal signal”? And how compressible is CSI?

How improbable is an endless sinusoidal wave given P(T|H)? And how improbable is CSI given the same conditional probability?

We can never know, which is why ID is a bust.

See reply 1.

But you’re avoiding the fact that you want something very uncomplicated to be a hallmark of design here contra to most ID conjecture.

LoL! Everything that we know says it is impossible for mother nature to produce an endless sinusoidal wave. That is why SETI uses it as a base signal for the artificial.

And anything highly improbable is also complex.

Well given that the universe will end, No. What type of wave is sunlight? Because that’s been going for a few billion years (or thousand if you’re a YEC)

Richie always ignores the context:

An endless, sinusoidal signal – a dead simple tone – is not complex; it’s artificial. Such a tone just doesn’t seem to be generated by natural astrophysical processes. In addition, and unlike other radio emissions produced by the cosmos, such a signal is devoid of the appendages and inefficiencies nature always seems to add — Shostak

Funny how IDers always turn to SETI despite the fact they could, if their claims were true, do similar design detection work right here on earth.

Yet, once again, it’s for someone else to do ID’s work for it.

LoL! @ OM- We have done the design work here on earth. And you don’t have anything to refute our design inference for biology.

I take it that has you very upset

keiths:

Patrick:

By “maximally fit”, do you mean “maximally fit given the degree of overlap in the binding sites for this particular run” or “as fit as it is possible for a genome to be”?

It would be interesting to see what would happen if instead of randomly choosing the locations of binding sites at the beginning of the run, you instead chose them manually to match the following pattern, where ‘B’ indicates a binding site and ‘N’ a non-binding site:

BBNBNNBNNNBNNNNB…

…with an L that is large enough to span the widest B[N]+B sequence.
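A minimal sketch of how the proposed layout could be generated, assuming each letter in the pattern stands for one genome position and the gap grows by one N after every B. The function names are illustrative and do not come from either ev implementation.

```python
def gapped_site_positions(num_sites: int) -> list[int]:
    """Return site start positions with gaps that widen by one each time."""
    positions = []
    position = 0
    for gap in range(1, num_sites + 1):
        positions.append(position)
        position += gap
    return positions


def render(positions: list[int], length: int) -> str:
    """Draw the layout as a string of B (site) and N (non-site) marks."""
    marks = set(positions)
    return "".join("B" if i in marks else "N" for i in range(length))


positions = gapped_site_positions(6)
print(positions)              # [0, 1, 3, 6, 10, 15]
print(render(positions, 16))  # BBNBNNBNNNBNNNNB
```

Under the same assumption, sixteen sites would place the last B at position 120.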

I mean that it recognizes all of the binding sites and no spurious sites. Zero mistakes.

I tried what I think is a more difficult overlap, by setting the binding sites to be the first 16 bases using Schneider’s standard problem settings. I then ran it 10 times with a population of 1000, tournament selection, and a mutation rate of 0.2%. A maximally fit genome evolved in 595.4 generations on average (with a standard deviation of 259.4).

There is an example in the comments of load-ev.lisp showing how to explicitly set overlapping binding sites.

Well, first, they would calculate the Shannon Information content.

Patrick:

I was thinking that mine would be more difficult, by the following reasoning: both schemes have B’s at every possible distance from each other within the binding site length L, but mine has more N’s at those same distances relative to B’s.

To be maximally fit, a genotype’s recognizer must recognize all B’s while rejecting all N’s. The more the N’s “interfere” with the B’s (by being intermixed with them at the same distances that other B’s are), the harder the problem should be, as far as I can tell.

Or to put it somewhat differently, the span of the harder-to-satisfy portion of the genome is greater with the gapped approach than it is when the B’s are bunched together.
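The "recognize all B's, reject all N's" criterion can be made concrete with a small sketch. This is an illustration rather than code from ev: the weight matrix is a plain list of per-position rows in A, C, G, T order, and a position counts as recognized when its score is at least the threshold (the exact comparison used by ev is an assumption here).

```python
BASES = "ACGT"


def score(genome: str, start: int, weights: list[list[int]]) -> int:
    """Sum the weight of the base found at each position of the window."""
    return sum(
        weights[i][BASES.index(genome[start + i])] for i in range(len(weights))
    )


def count_mistakes(
    genome: str, site_starts: list[int], weights: list[list[int]], threshold: int
) -> int:
    """Count missed binding sites plus spuriously recognized positions."""
    width = len(weights)
    sites = set(site_starts)
    mistakes = 0
    for start in range(len(genome) - width + 1):
        recognized = score(genome, start, weights) >= threshold
        if recognized != (start in sites):
            mistakes += 1
    return mistakes


# Toy recognizer of width 2 that rewards "A" followed by "C".
toy_weights = [[1, 0, 0, 0], [0, 1, 0, 0]]
print(count_mistakes("ACGGACTT", [0, 4], toy_weights, 2))  # 0
print(count_mistakes("ACGGACTT", [0, 5], toy_weights, 2))  # 2
```

A maximally fit genome in the sense used above is one for which this count is zero.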

What did the weights look like for the maximally fit genotypes?

Good. I’ll play around with it this weekend if I have time.

First Larry Krauss gets accused of being a dishonest quote-miner and now Patrick gets accused of misrepresentation.

Ev Ever Again

Interesting that the man who had this to say here at TSZ:

should choose the safety of a comments-off environment to level all those accusations at the participants in this thread.

Draw your own conclusions re intellectual courage…

Indeed. Winnie the Creationist of Very Little Brain seems also to have Very Small Balls [*]. I’ll note also that I sent him an email, as suggested by someone in the comments, when I made the original post. I received no such courtesy in return.

[*] Although I have respect for Betty White’s viewpoint.

LoL! By that “logic” evolutionists don’t have any balls and they are all cowards.

Nice own goal…

A kick in the balls is no joke, I don’t care how small they are.

Granny Spice has a post with no comments here:

Leading to the article in the place where comments are not allowed, here:

http://www.evolutionnews.org/2016/03/ev_ever_again102716.html

It seems like this is the place for discussion (Winston).

And he’d be very welcome.

I’m looking for Dr. Ewert’s responses to Patrick. Where can I find them?

PaV,

Not here, sadly. Instead of participating in a discussion, Ewert prefers to post monologues on a site where comments are disabled.

Click here for the latest monologue.

While I wait for a response to my previous post, let me add something to this discussion, if I may.

The results of ev show a gain in information using Shannon’s definition of information. So let’s just let this be a given.

The more important part of all of this revolves around two questions: (1) is this ‘information’ generated randomly; and, the more important, and fundamental question, (2) does the ev program represent what happens in nature.

I’ll leave it to Dembski and Ewert to argue the first question. And I agree with them 100%.

But, let’s move on to the important second question: has Schneider successfully captured, in his simulation, the way that nature works?

I believe the answer to that question is: no.

There are a number of problems. I’ll restrict myself to a few:

(1) The most obvious is the mutation rate. Schneider compares the ev mutation rate to the HIV-1 virus, and notes that it is ten times faster!

Well, HIV-1 is a virus known to mutate about as quickly as anything we know. And the ev program is evolving even faster! The “genomic” mutation rate is about 0.4%. The human genome mutation rate is more than 10,000 times slower. Should we just ignore this?

(2) Immediately after saying his model “matches” biology, in the very next sentence he writes: “Because half of the population always survives each selection round in the evolutionary simulation presented here, the population cannot die out, and, there is no lethal level of incompetence. While this may not be representative of all biological systems, since extinctions and threshold effects do occur, it is representative of the situation in which a functional species can survive without a particular genetic control system but which would do better to gain control ab initio.”

This almost contradicts him.

But the distinction that he sees as ‘saving’ him, actually undermines his program.

That is:

(3) Schneider invokes “gene duplication”. He writes: “Given that gene duplication is common and that transcription and translation are part of the housekeeping functions of all cells, the program simulates the process of evolution of new binding sites from scratch. The exact mechanisms of translation and locating binding sites are irrelevant.”

Schneider doesn’t expound here on his point, but he is basically saying that via gene duplication, and because of the presence of cell machinery that is simply a given for each cell/organism, and because of the nature (and location–which he doesn’t speak much about, but does have relevance here, though I’m not going to address that) of the incipient binding site on the RNA/DNA, this “process of evolution of new binding sites” falls outside of ‘purifying selection.’ IOW, as he states, the organism/cell doesn’t need this ‘control’; it will simply operate ‘better’ with it. So, this ‘process’ is effectively invisible to natural selection.

But, wait a second, we have a problem here:

(4) Schneider himself tells us that if he switches off “selection,” then the information gains disappear!! So, he is invoking a process that is invisible to “selection,” but then his notion of “selection” (which, again, is itself problematic) becomes critical for the information to arise (as my own runs on his ev program have aptly demonstrated), and to remain.

This is a serious problem.

But the problem has a deeper basis:

(5) Schneider uses a “weight” matrix in his model. After each run, all of the binding sites are evaluated algebraically using this matrix and then assigned a value. Then the value of these sites is added up, giving, if you will, a total score for that particular genome.

Here’s the even more serious problem(s). First, the “score” can only be arrived at by knowing what the target sequence is. This is almost exactly like Dawkins’ “Methinks it is like a weasel” example, where arriving at the target sequence completely randomly is near impossible, but can be reached somewhat easily if every “correct” character is kept. The obvious difference here is ONLY that the “target sequence” can change, though it won’t change rapidly.

For example, if one can “find” the correct binding sites on average in 750 generations or so, and, if there is only ONE mutation per generation, this means the binding sites don’t quickly make dramatic changes, an effect that is incredibly heightened by Schneider’s decision to “eliminate” half of the genomes in each generation, which in short order introduces an almost constant set of binding sites.

Patrick writes above that when he increased the mutation rate for the program it took “longer” to optimize the binding sites: in real life we would expect the opposite for a randomly produced effect.

All this means:

(6) The way the ev program works is by having “selection” able to see not just random processes that produce no fitness effects, but, even more incredibly, “selection” can “see” the steps on the way to a fitness effect. That’s what the “weight” matrix does: it takes each step of the way, evaluates it, selects for it, eliminates all the bad genomes, and then moves on.

It is no small wonder, then, that “new information” is produced. But this is not what happens in nature. It is simply not a realistic model of life.

So, let me conclude with Dembski’s assessment of ev, which captures what I’m saying here:

(My bolding)

PaV:

You got one. Read it and follow the link.

keiths,

It took me 30 minutes to write the last post. When I started, I had just posted the request.

I’ve looked at the post you’ve linked to. That, in fact, is what led me here.

PaV:

You asked where you could find Ewert’s responses to Patrick:

The link I gave you answers your question. It shows where you can find Ewert’s responses to Patrick. Ewert’s post is a response to this thread.

Jesus, PaV. If you ask a question, don’t be surprised when someone answers it.

I thought it made sense to feature this OP in view of Winston Ewert’s commenting on it elsewhere.

keiths,

I understood your prior post. You did not understand mine. Yes, of course, Ewert was answering Patrick at ENV. No need to be vexed.

PaV,

Apparently you didn’t realize that when you asked:

Again, why be surprised when you ask a question and someone gives you the correct answer?

Thanks, Alan. I’m putting together a response to Winston, but work has priority. One of the points I’m discussing is that search is a poor metaphor for what evolution does. Joe Felsenstein’s recent comments give me confidence I’m on the right track.

Wouldn’t the question of interest be whether search is a poor metaphor for what ev does?

Perhaps he was startled by where the correct answer came from.

And why get so bent out of shape when someone is writing a post while you’re responding to his prior post? You’ve made a mountain out of molehill. I’ve tried to explain in a temperate tone. Apparently that doesn’t work. Let’s just drop this niggling point.

You’re correct, Mung. I was quite surprised where Ewert’s reply came from.

This mRNA is then picked up by a ribosome (which also attaches to a binding site) that transcribes a protein from it.

Typo: you mean “translates”. Transcription is using DNA as a template for RNA.

Patrick, you may be a shitty moderator, but this is a hell of an OP. Well done!

Thank you, I think.

Basically, it is the number of bits required to find one binding site out of set of binding sites in a genome of a certain length.

I guess it finds binding sites without searching for them. Somehow.

The how is not a secret.

Relax, PaV. You got confused and asked a silly question. I answered it anyway.

Now you know where to find Ewert’s response to Patrick.

walto:

walto,

You may be a crappy commenter most of the time, but your assessment of Patrick’s OP is spot on. Good job.

Excellent de-escalation! 😉

ETA not referring to Keiths’ comments to PaV, obviously. 😉

Thank you, I think.

My job was a lot easier than Patrick’s though. Both OPs–at least when they’re substantial–and moderating are harder than commenting.

You can simply inspect the code, either mine or Dr. Schneider’s, to see that the only changes to the genomes from one population to the next are random. Information is generated randomly but preserved non-randomly, just as in biological evolution.

What problem do you think this poses? Try decreasing the mutation rate. What you’ll find is that, given the same small population size, it will take more generations for an optimal individual to appear. Now try decreasing the mutation rate but significantly increasing the population size. You’ll see the number of generations required for appearance of an optimal individual to go down.

Both the ev genome and the population size are extremely small compared to biological genomes and populations. It’s not surprising that a higher mutation rate is required to observe evolution within a reasonable number of CPU cycles. That doesn’t mean that less efficient settings won’t work.

There is no contradiction in what you quoted. He’s merely pointing out where ev models biology and where it does not.

Schneider’s use of truncation selection is also not an issue. I was able to reproduce his results using tournament selection, with a variety of tournament pool sizes and mutation rates.
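To make the difference between the two selection schemes concrete, here is a minimal sketch. It is not lifted from either implementation: the function names are hypothetical, `mistakes` is any function that maps an individual to its mistake count (lower is better), and the way survivors are copied over the discarded half is an assumption.

```python
import random
from typing import Callable, Sequence


def truncation_selection(population: Sequence, mistakes: Callable) -> list:
    """Keep the better half and refill the population with copies of it."""
    ranked = sorted(population, key=mistakes)
    survivors = ranked[: max(1, len(ranked) // 2)]
    return [survivors[i % len(survivors)] for i in range(len(population))]


def tournament_selection(
    population: Sequence, mistakes: Callable, pool_size: int, rng: random.Random
) -> list:
    """Fill each slot with the best of pool_size randomly drawn individuals."""
    return [
        min(rng.sample(list(population), pool_size), key=mistakes)
        for _ in population
    ]


# Toy stand-in: each "individual" is just its own mistake count.
toy_population = [5, 3, 8, 1]
print(truncation_selection(toy_population, lambda x: x))  # [1, 3, 1, 3]
print(tournament_selection(toy_population, lambda x: x, 2, random.Random(0)))
```

In both cases the only thing selection sees is the mistake count; mutation would be applied separately to the returned population.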

Now here is a contradiction, in your final sentence. Schneider points out that one known evolutionary mechanism that results in new functionality is gene duplication followed by mutation. He notes, in the excerpt you provided, that while an organism does not need the new functionality (if it did it wouldn’t be viable), it operates better with it.

Operating better is a selectable feature. Your claim that it is “invisible to natural selection” does not follow from what Schneider wrote.

It’s not a problem at all. Improvements to function within a particular environment are selectable.

You misunderstand the implementation of ev. The weight matrix, the threshold for recognizing a binding site, and the multiple (sixteen by default) binding sites are all part of the genome and all are subject to mutation. Using Schneider’s default settings, there are 256 potential binding sites of width 6 yielding a genome length of 261. The weight matrix consists of 24 integers encoded by 5 bases each for a total of 120 bases. The threshold consists of another 5 bases. That means that almost 48% of the genome encodes the recognition mechanism.

The number of bases used to encode binding sites is 96. Plus, these binding sites overlap with the recognizer and with each other. Some mutations may impact the recognizer and three binding sites.

Given that, it’s obvious that your claim that the “target sequence” (which doesn’t exist in ev) “won’t change rapidly” is false. It will change nearly half the time a mutation takes place.
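The arithmetic behind those figures can be checked with a short sketch. The variable names are illustrative, and the last step assumes a single point mutation lands on a uniformly random base of the genome.

```python
def genome_layout(
    potential_sites: int = 256,
    site_width: int = 6,
    bases_per_integer: int = 5,
    num_sites: int = 16,
) -> dict:
    """Reproduce the genome bookkeeping for the default problem settings."""
    genome_length = potential_sites + site_width - 1    # 261
    weight_bases = 4 * site_width * bases_per_integer   # 24 integers, 120 bases
    threshold_bases = bases_per_integer                 # 5
    recognizer_bases = weight_bases + threshold_bases   # 125
    return {
        "genome_length": genome_length,
        "recognizer_bases": recognizer_bases,
        "site_bases": num_sites * site_width,           # 96
        # Chance that a uniformly placed point mutation hits the recognizer.
        "recognizer_fraction": recognizer_bases / genome_length,
    }


layout = genome_layout()
print(layout["genome_length"], layout["recognizer_bases"], layout["site_bases"])
# 261 125 96
print(f"{layout['recognizer_fraction']:.1%}")  # 47.9%
```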

As already noted, the specific selection mechanism is immaterial. Evolution still occurs.

The binding sites are also not “almost constant”. The whole point of using a weight matrix is to model the biological fact that binding sites do not consist of a set sequence of nucleotides. If you run Schneider’s Java implementation you will see the range of bases in each binding site.

That doesn’t follow. In both biological and software evolution there are mutation rates that are too high to result in viable populations. Consider the impact of high radiation levels, for just one biological example.

As noted above, your claim that the random processes in ev “produce no fitness effects” is incorrect. The rest of your paragraph depends on that faulty assumption.

Differential reproductive success based on heritable characteristics is exactly what we observe in life.

The fitness function is analogous to the environment in biological evolution. If that “smuggles in” information, then so does the environment.

Dembski appears to be complaining that ev models biological evolution and demonstrates that simple, known evolutionary mechanisms result in measurable information gain. I can see how that’s a problem for Dembski’s religious beliefs, but it’s hardly an argument against what ev demonstrates.

To summarize:

1) The mutation rate discussion is a red herring.

2) The selection method used does not change the results.

3) The improvements provided by binding sites model selectable functionality in biological organisms.

4) There is no target sequence.

5) The recognizer does mutate as much as the rest of the genome.

6) Binding sites are not “almost constant”.

Intelligent design creationists should really write some code and run some tests before making assertions.

The bottom line is that ev demonstrates that simple evolutionary mechanisms can and do generate information in populations of genomes.

I also note that no critic of ev has yet addressed the interesting result of Rsequence evolving to match Rfrequency. That suggests a fundamental lack of understanding of ev.

That depends on your definition of “search”. Like “information”, that seems to be one of the words intelligent design creationists appreciate for its equivocation potential. “It’s a search, huh? Then there must be a target! And a searcher!”

What’s really going on in ev is that the recognizer, including the threshold, and the binding sites are co-evolving. There is no explicit target and there is no “oracle” that provides information on how to get closer to that non-existent target. There’s just a number of binding sites and some simple evolutionary mechanisms that stochastically preserve information that is randomly generated from that simulated environment.

Plus there’s the whole Rsequence evolving to Rfrequency thing, which is really cool.

Yes, “the genome” is designed. 🙂

EA 101

If no target sequence exists in ev, as you claim, then how can it be the case that “It will change nearly half the time a mutation takes place.”?

It would really help if you skeptics would define what you mean by ‘target’ and ‘search.’ Do you agree that a target can be implicit? That a target does not have to be explicit?

If so, saying that there is no explicit target really tells us only that there is no explicit target. It does not tell us that there are no targets.

Thank you for encouraging me to be more pedantic:

Given that, it’s obvious that your claim that the “target sequence” (which doesn’t exist in ev) “won’t change rapidly” is false. The recognizer, that you are incorrectly calling a target sequence, will change nearly half the time a mutation takes place.

Evolution doesn’t have a target and search is a terrible metaphor for what evolution does. I’m only using those terms because I’m responding to PaV (and DEM).

I’ve provided my code and links to Dr. Schneider’s. Please point to the target, either explicit or implicit.