My problem with the IDists’ 500 coins question (if you saw 500 coins lying heads up, would you reject the hypothesis that they were fair coins, and had been fairly tossed?) is not that there is anything wrong with concluding that they were not. Indeed, faced with just 50 coins lying heads up, I’d reject that hypothesis with a great deal of confidence.

It’s the inference drawn from that answer of mine that I object to: that if, as a “Darwinist”, I am prepared to accept that a pattern can be indicative of something other than “chance” (exemplified by a fairly tossed fair coin), then I must logically also sign on to the idea that an Intelligent Agent (as the alternative to “Chance”) must be inferrable from such a pattern.

This, I suggest, is profoundly fallacious.

First of all, it assumes that “Chance” is the “null hypothesis” here. It isn’t. Sure, the null hypothesis (fair coins, fairly tossed) is rejected, and, sure, the hypothesized process (fair coins, fairly tossed) is a stochastic process – in other words, the result of any one toss is unknowable beforehand (by definition, otherwise it wouldn’t be “fair”), and both the outcome sequence of 500 tosses and the proportion of heads in the outcome sequence are also unknown. What we do know, however, because of the properties of the fair-coin-fairly-tossed process, is the probability distribution, not only of the proportions of heads that the outcome sequence will have, but also of the distribution of runs-of-heads (or tails, but to keep things simple, I’ll stick with heads).

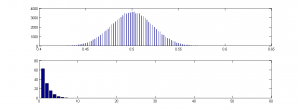

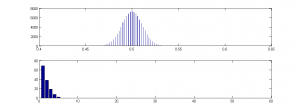

And in fact, I simulated a series of 100,000 such runs (I didn’t go up to the canonical 2^500 runs, for obvious reasons), using MatLab, and here is the outcome:

As you can see from the top plot, the distribution is a beautiful bell curve, and in none of the 100,000 runs do I get anything even as low as 40% Heads or as high as 60% Heads.

Moreover, I also plotted the average length of runs-of-heads – the average is just over 2.5, and the maximum is less than 10, and the frequency distribution is a lovely descending curve (lower plot).
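For readers who want to try this themselves, here is a minimal Python sketch of that kind of simulation. It is not the original MatLab script: the function names, the seed, and the default of 2,000 sequences (rather than 100,000, to keep it quick) are assumptions made for illustration, and the run-length figures it prints depend on how runs are counted, so they need not match the plots exactly.

```python
import random
from collections import Counter

def toss_fair(n, rng):
    """Return n fair tosses as a list of 1 (Heads) and 0 (Tails)."""
    return [rng.randint(0, 1) for _ in range(n)]

def head_runs(seq):
    """Return the lengths of every maximal run of consecutive Heads in seq."""
    runs, current = [], 0
    for toss in seq:
        if toss == 1:
            current += 1
        elif current:
            runs.append(current)
            current = 0
    if current:  # a run that reaches the end of the sequence
        runs.append(current)
    return runs

def simulate(n_sequences=2000, n_tosses=500, seed=1):
    """Simulate many sequences; return the proportions of Heads and a
    Counter mapping run length -> number of runs of Heads of that length."""
    rng = random.Random(seed)
    proportions = []
    run_counts = Counter()
    for _ in range(n_sequences):
        seq = toss_fair(n_tosses, rng)
        proportions.append(sum(seq) / n_tosses)
        run_counts.update(head_runs(seq))
    return proportions, run_counts

if __name__ == "__main__":
    proportions, run_counts = simulate()
    print("lowest / highest proportion of Heads:", min(proportions), max(proportions))
    for length in sorted(run_counts):
        print(length, run_counts[length])
```

Plotting a histogram of `proportions` and a bar chart of `run_counts` would give rough analogues of the top and lower plots.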

If therefore, I were to be shown a sequence of 500 Heads and Tails, in which the proportion of Heads was:

- less than, say 40%, OR

- greater than, say 60%, OR

- the average length of runs-of-heads was a lot more than 2.5, OR

- the distribution of the proportions was not a nice bell curve, OR

- the distribution of the lengths of runs-of-heads was not a nice descending Poisson like the one in the lower plot,

I would also reject the null hypothesis that the process that generated the sequence was “fair coins, fairly tossed”. For example:

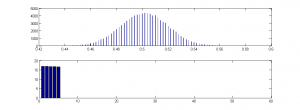

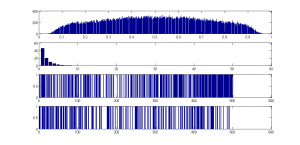

This was another simulation. As you can see, the bell curve is pretty well identical to the first, and the proportions of heads are just as we’d expect from fair coins, fairly tossed – but can we conclude it was the result of “fair coins, fairly tossed”? Well, no. Because look at the lower plot – the mean length of runs of heads is 2.5, as before, but the distribution is very odd. There are no runs of heads longer than 5, and all lengths are of pretty well equal frequency. Here is one of these runs, where 1 stands for Heads and 0 stands for tails:

1 0 1 1 1 0 0 1 1 1 1 1 0 0 0 0 0 1 1 1 1 0 0 0 1 1 1 1 0 0 0 0 1 1 1 1 1 0 0 0 0 1 1 1 1 1 0 1 1 1 1 0 0 0 0 1 1 1 1 0 0 1 1 1 1 1 0 0 0 1 1 1 1 0 0 0 1 1 1 1 0 0 0 1 0 0 0 0 0 1 1 1 1 1 0 1 1 1 1 0 0 0 1 1 1 1 0 0 0 0 0 1 1 0 1 1 1 1 1 0 0 0 0 1 0 1 1 0 0 0 0 0 1 1 0 0 0 0 1 1 1 1 1 0 0 1 1 1 1 0 0 0 1 1 0 0 0 1 1 0 0 1 1 0 1 1 1 1 1 0 1 1 0 0 0 1 1 1 1 0 1 1 1 1 1 0 0 0 0 0 1 0 1 0 0 1 1 1 1 0 1 1 1 1 0 0 0 0 1 1 0 0 0 0 0 1 1 0 0 0 1 0 1 1 1 1 0 0 0 0 0 1 1 1 0 0 0 0 1 1 1 1 0 0 0 0 1 1 1 1 0 1 1 1 0 0 0 0 1 1 1 1 0 1 1 1 1 1 0 0 0 0 0 1 1 1 0 0 0 0 1 1 0 0 0 0 1 1 0 1 0 0 0 1 1 1 0 0 0 0 0 1 0 0 0 0 1 1 1 1 0 0 0 0 1 1 1 1 0 1 1 1 1 1 0 1 1 1 1 0 0 0 0 0 1 1 1 1 0 0 0 0 1 1 1 0 0 0 1 1 1 0 0 0 0 1 0 0 0 1 1 1 1 1 0 0 0 0 0 1 1 0 0 0 1 1 0 0 0 0 1 1 1 1 1 0 1 0 0 1 1 0 0 0 1 1 0 0 0 0 1 1 0 0 0 1 1 1 0 0 1 1 1 1 0 0 0 0 0 1 1 0 0 1 1 1 1 1 0 0 0 0 1 1 1 0 1 1 1 0 0 0 0 1 1 1 1 0 0 0 0 1 1 0 0 0 1 1 1 0 0 0 0 1 1 1 1 0 0 1 1 1 0 0 1 0 0 0 0 1 1 1 0 0 0 0 0 1 1 1 1 0 0 1 1 1 1 0 0 0

Would you detect, on looking at it, that it was not the result of “fair coins fairly tossed”? I’d say at first glance, it all looks pretty reasonable. Nor does it conform to any fancy number, like pi in binary. I defy anyone to find a pattern in that run. The reason I so defy you is that it was actually generated by a random process. I had no idea what the sequence was going to be before it was generated, and I’d generated another 99,999 of them before the loop finished. It is the result of a stochastic process, just as the first set were, but this time, the process was different. For this series, instead of randomly choosing the next outcome from an equiprobable “Heads” or “Tails”, I randomly selected the length of the next run of each toss-type from the values 1 to 5, with an equal probability of each length. So I might get 3 Heads, 2 Tails, 5 Heads, 1 Tail, etc. This means that I got far more runs of 5 Heads than I did the first time, but far fewer (infinitely fewer in fact!) runs of 6 Heads! So ironically, the lack of very long runs of Heads is the very clue that tells you that this series is not the result of the process “fair coins, fairly tossed”.

But it IS a “chance” process, in the sense that no intelligent agent is doing the selecting, although an intelligent agent is designing the process itself – but then that is also true of the coin toss.
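The run-length process described above can be sketched in a few lines of Python. This is an illustration rather than the original script: the function names are made up, and the random choice of the first face and the trimming of the final run to fit 500 tosses are assumptions.

```python
import random

def toss_by_runs(n, rng, max_run=5):
    """Build n tosses by drawing each run's length uniformly from 1..max_run,
    alternating between Heads (1) and Tails (0)."""
    seq = []
    face = rng.randint(0, 1)  # assumption: the first face is picked at random
    while len(seq) < n:
        seq.extend([face] * rng.randint(1, max_run))
        face = 1 - face  # the next run is of the other toss-type
    return seq[:n]  # assumption: the final run is cut short to fit

def longest_run(seq, face=1):
    """Length of the longest unbroken run of `face` in seq."""
    best = current = 0
    for toss in seq:
        current = current + 1 if toss == face else 0
        best = max(best, current)
    return best

if __name__ == "__main__":
    seq = toss_by_runs(500, random.Random())
    print(" ".join(map(str, seq)))
    print("longest run of Heads:", longest_run(seq))
```

Whatever the seed, `longest_run` can never exceed 5 for a sequence built this way, which is exactly the giveaway: a fair-coin sequence of the same length will usually contain at least one longer run.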

Now, how about this one?

Prizes for spotting the stochastic process that generated the series!

The serious point here is that by rejecting the null of a specific stochastic process (fair coins, fairly tossed) we are NOT rejecting “chance” (because there are a vast number of possible stochastic processes). “Chance” is not the null; “fair coins, fairly tossed” is.

However, the second fallacy in the “500 coins” story is that not only are we not rejecting “chance” when we reject “fair coins, fairly tossed”, but nor are we rejecting the only alternative to “Intelligently designed”. We are simply rejecting one specific stochastic process. Many natural processes are stochastic, and the outcomes of some have bell-curve probability distributions, and of others Poisson distributions, but still others, neither. For example, many natural stochastic processes are homeostatic – the more extreme some parameter becomes, the more likely is the next state to be closer to the mean.
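As a toy illustration of a homeostatic stochastic process, here is a hedged Python sketch of a random walk whose steps are biased back towards zero. Nothing about it comes from a real natural system: the function name, the `pull` parameter, and the linear form of the bias are all assumptions chosen for simplicity.

```python
import random

def homeostatic_walk(n_steps, rng, pull=0.05):
    """Random walk on the integers, starting at 0, in which the further the
    state is from 0 the more likely the next step is to head back."""
    x, path = 0, []
    for _ in range(n_steps):
        # Chance of stepping up falls as x rises above 0 and grows as x drops
        # below 0; it is clamped so that it stays a valid probability.
        p_up = min(max(0.5 - pull * x, 0.0), 1.0)
        x += 1 if rng.random() < p_up else -1
        path.append(x)
    return path

if __name__ == "__main__":
    path = homeostatic_walk(5000, random.Random())
    print("range visited:", min(path), max(path))
```

Every step is still unpredictable, yet the walk stays inside a narrow band around zero, unlike an unbiased walk, which tends to wander further and further away as the number of steps grows.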

The third fallacy is that there is something magical about “500 bits”. There isn’t. Sure, if a p value for data under some null is less than 2^-500 we can reject that null, but if physicists are happy with 5 sigma, so am I, and 5 sigma is only about 2^-22 IIRC (it’s too small for my computer to calculate).
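As a rough check on the 5 sigma figure, the one-tailed tail probability of a standard normal distribution can be computed with Python's standard library. The helper name below is made up for illustration. The result is roughly 2.9 × 10^-7, which is about 2^-22 (a two-tailed test doubles it).

```python
import math

def upper_tail_p(sigmas):
    """One-tailed probability that a standard normal value exceeds `sigmas`."""
    return 0.5 * math.erfc(sigmas / math.sqrt(2))

p = upper_tail_p(5)
print(p)             # the 5 sigma one-tailed p value
print(math.log2(p))  # the same value expressed as a power of 2
```

Either way, the threshold physicists accept is many orders of magnitude more lenient than 2^-500.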

And fourthly, the 500 bits is a phantom anyway. Seth Lloyd computed it as the information capacity of the observable universe, which isn’t the same as the number of possible times you can toss a coin, and in any case, why limit what can happen to the “observable universe”? Do IDers really think that we just happen to be at the dead centre of all that exists? Are they covert geocentrists?

Lastly, I repeat: chance is not a cause. Sure we can use the word informally as in “it was just one of those chance things….” or even “we retained the null, and so can attribute the observed apparent effects to chance…” but only informally, and in those circumstances, “chance” is a stand-in for “things we could not know and did not plan”. If we actually want to falsify some null hypothesis, we need to be far more specific – if we are proposing some stochastic process, we need to put specific parameters on that process, and even then, chance is not the bit that is doing the causing – chance is the part we don’t know, just as I didn’t know when I ran my MatLab script what the outcome of any run would be. The part I DID know was the probability distribution – because I specified the process. When a coin is tossed, it does not fall Heads because of “chance”, but because the toss in question was one that led, by a process of Newtonian mechanics, to the outcome “Heads”. What was “chance” about it is that the tosser didn’t know which, of all possible toss-types, she’d picked. So the selection process was blind, just as mine is in all the above examples.

In other words it was non-intentional. That doesn’t mean it was not designed by an intelligent agent, but nor does it mean that it was.

And if I now choose one of those “chance” coin-toss sequences my script generated, and copy-paste it below, then it isn’t a “chance” sequence any more, is it? Not unless Microsoft has messed up (Heads=”true”, Tails=”false”):

true false true false false false false true true false true true false true true true false true false true true true true true false true false false false true true true true true true true true true false false false true true false true true false false false true false false true true false false false true false true false true true true true true true false false true false true true true true true true false true true true false true false false false false false false false false false false false false false false true false false true false true true true false true true true false true true false false true true true true true false true false true false false true true false true true true true true true true false false true false false true false false true true false true true true true false false true false false false false true false false true true false false true false true true false true false true true true true true true true true true true true true true false true false false true false false false true false false true true true false false true true true false true true true false false false true false true false false true false true false true true false true false true false true false false true true true false true true false false false false true false false true false true true true true true false true true true false false false true false true false true true true false false false false false false false true true false true false true false true true false false false true false true true false true false true true true true false true false false false false true false true true true true false false true false true true true true false true true false false true false true false true true true true true false false false true true true false false false true true true false true false true true true true true true true false false true false true false false true true true false true false true true false false true 
true true false true true false false false true false false false false true false false true true true true false false true false false true true true true false true false false true false true true false false true true false false true true false true false true true false false false false false false false true false true true false false false true true true false false false true false true false false false true true false true false true true true true false true false false false true false true true true false false false false true false true true false true true true false true true false true false false true false true false true true false false true false true false true false false true true false false

I specified it. But you can’t tell that by looking. You have to ask me.

ETA: if you double click on the images you get a clear version.

ETA2: Here’s another one – any guesses as to the process (again entirely stochastic)? Would you reject the null of “fair coins, fairly tossed”?

0 1 0 1 0 1 0 1 0 0 1 1 1 1 1 0 1 0 1 1 1 1 0 0 0 1 0 1 1 1 0 1 0 0 0 1 1 0 0 0 1 1 0 0 0 1 0 1 1 0 1 0 0 1 1 0 1 1 0 1 1 0 1 0 1 0 0 1 1 0 0 1 0 0 1 0 0 0 1 1 0 1 1 0 0 0 1 1 1 0 1 0 0 0 1 0 1 1 0 1 1 0 1 1 0 0 0 1 1 1 1 0 0 0 0 0 1 1 0 0 1 0 1 1 1 1 0 0 1 1 0 1 1 0 0 1 0 1 1 1 1 0 0 0 0 1 1 1 1 0 0 0 1 1 1 1 0 1 0 0 0 1 0 1 0 0 1 1 1 0 0 0 0 1 1 0 0 1 0 1 0 1 1 0 0 1 1 1 0 1 0 0 0 1 1 0 1 0 1 0 0 1 0 1 1 1 0 0 1 0 1 0 1 0 0 1 0 1 1 0 0 0 0 0 0 1 1 1 0 0 0 0 1 1 1 1 1 0 0 0 1 1 1 0 1 0 0 0 0 1 1 1 0 1 0 0 1 0 1 1 0 0 0 1 1 0 0 1 0 0 0 1 1 1 0 0 1 0 0 1 1 1 1 0 0 0 0 1 1 0 1 0 0 0 1 0 1 1 1 0 0 1 0 0 0 1 0 1 1 0 1 0 1 1 0 1 1 1 0 1 0 1 0 0 0 0 1 1 1 1 1 0 1 0 1 1 0 0 1 1 0 1 1 1 0 1 1 0 0 1 1 0 1 0 1 0 1 0 0 0 1 0 1 0 1 1 1 1 0 0 1 0 1 0 1 1 1 0 1 0 0 1 0 0 1 1 1 0 0 1 1 0 0 1 0 0 0 1 0 0 1 0 1 0 1 1 0 0 0 0 1 1 0 0 0 1 0 1 1 1 0 1 0 1 0 0 1 1 0 1 1 0 0 0 1 0 1 1 0 0 1 1 0 1 0 0 1 0 0 1 1 1 0 1 0 0 1 0 1 0 1 0 1 1 0 1 0 1 1 0 0 1 1 1 0 1 0 0 0 0 1 0 1 1 1 1 0 1 0 0 0 1 1 1 0 0 1 1 0 1

Barry? Sal? William?

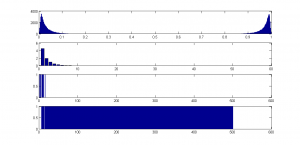

ETA3: And here’s another version:

The top plot is the distribution of proportions of Heads.

The second plot is the distribution of runs of Heads

The bottom two plots represent two runs; blue bars represent Heads.

What is the algorithm? Again, it’s completely stochastic.

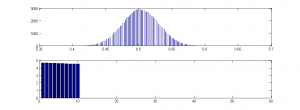

And one final one:

which I think is pretty awesome! Check out that bimodality!

Homochirality here we come!!!

No answer. Another darwinist lost the chance to get out of my list.

That’s not what I said or implied. My problem with GA’s is not that they exist in designed environments, but that the GA itself is designed with pre-loaded information about the target space. IOW, the GA “knows” what kind of problem – at least in general – it is attempting to solve.

Your GA from long ago, where you culled results below a certain threshold of what result you were attempting to achieve, had the same problem. Unless you can tell with some sort of specificity (1) what problem natural selection is attempting to solve, and (2) how we can vet the results, your implication that designed GA’s with built-in target spaces (built-in by means of “what to cull”) are analogous to natural selection is unfounded.

Natural selection is a result, not a goal. Nature doesn’t have goals; GAs do, and they are programmed in. Try programming a useful GA where your only criterion is “fitness” and no, you do not get to specify what “fitness” means, or what it is in relation to. Unless, of course, you can tell me what sets of characteristics comprise evolutionary “fitness”?

I can just see you guys programming a GA from an actual darwinian mindset:

Darwinist: “I need a GA that culls losers that are not fit below a threshold so we can find the most fit.”

Programmer: “Okay, no problem. What’s the target criteria?”

Darwinist: “The most fit.”

Programmer: “But, what does “fit” mean in this context? What are we looking for?”

Darwinist: “Just general fitness. It might be any number of things. It depends on the situation.”

Programmer: “Uhhhh .. okay. What are the culling parameters?”

Darwinist: “Those that are below 20% of target fitness.”

Programmer: “Jesus mother mary of ….. Okay. Well …errr .. what should my original population be comprised of?”

Darwinist: “Self-replicating virtual organisms.”

Programmer: “No shit, Sherlock. I mean, uhh, what kind of additional characteristics am I putting in the original population? What are we beginning with? Geometric forms? Are we solving a geometry problem? Stock market information? Are we trying to find a better spider program for investment companies? Should I start with some kind of tolerance and design information? Are we attempting to solve an engineering problem?”

Darwinist: “It could be any of those things, or any host of other things we need solved. We don’t really know.”

Programmer: &^%#%% )(*%& !!!!!!!

____________________________________________

What makes GAs work is not the process, but the information about the target space that is provided in the design of the GA. Without the information about the target space guiding them, GAs can do nothing of any value.

The GA designers provide the environmental information; they provide the initial structure and sets of values for the virtual organisms; they provide sets of rules for breeding, resource use and culling all with a specific target space in mind. Without that target space information being built into the entire system, nothing useful will be generated.

The mechanism is useless without target space information.

Which doesn’t alter the fact one bit that the evolutionary process modeled creates novel information and novel functions. Doesn’t matter what the goal is.

We can. Evolutionary fitness is the ability to survive and reproduce in your current local environment. The current local environment supplies the set of parameters necessary to consider. That includes climate, number and types of predators, availability of food and water, etc.

The current local environment supplies the information about the target space.

We’ll add genetic algorithms to the list of topics WJM doesn’t understand.

Can you quote where a Darwinist said this, please, Blas?

In the real world the current local environment supplies the information about the target space. The evolving population already has its own ‘rules’ for breeding, resource use and culling.

Another FAIL for WJM.

What information about the target space does the environment provide? Where did that information come from?

If those rules do not have information about the target space in them (meaning, allow for the successful acquisition of the target space through those rules), the target cannot be acquired. Assuming the information already exists in the population doesn’t change the fact that information about the target must exist in the population in order for it to acquire the target.

For example, in a designed GA, unless the population and the environment have in them highly coordinated information about “flight”, a flightless population of virtual organisms will not be able to achieve flight – simply because that potential wasn’t built into the structure of the GA. The potential has to be built in to the system from the get-go, and one must begin with a population that has the eventual capacity to achieve it.

Your assumption that the information necessary to achieve evolutionary successes already resides in the population and the environment changes nothing; where did the information about the target, which must be highly coordinated between environment and organism, come from?

Have you heard of the peppered moth, William? When industrial pollution in England was strong, the environment favored darker moths. I am sure the name should ring the bell.

William,

Nature is “pre-loaded” with the information that polar bears are better off with white coats than with black ones. If nature is biased toward some phenotypes and away from others, how is it a “problem” when a GA is biased in the same way?

Don’t you recognize your own words?

“So can we please jettison this canard that “Darwinists” propose chance either as an explanation for the complexity of life”

OK, thanks, but in that case you are still confused about what a GA is and how what it does maps on to evolutionary theory as proposed by Darwin, as I will try to explain below:

Yes, I can tell you the problem “natural selection” is “attempting” to “solve”. BTW, the scare quotes are intentional – “natural selection” is not “attempting” anything. Nor is it a “result”. It is merely a fanciful way of describing (by analogy with selective breeding by humans) the virtually inevitable process by which variants that tend to reproduce better will tend to be reproduced more often. The only reason the “tend” is in there is that occasionally some utterly unrelated catastrophe will wipe out the most awesome reproducer, despite its best “efforts” to reproduce.

But with that very great caveat: “natural selection” is “attempting” to “solve” the “problems” presented to a population in its “efforts” to persist within that environment. In a GA, the “environment” is designed by the programmer, usually (occasionally, a programmer will design a GA in which the environment itself randomly presents changing resources and threats, mimicking nature). Within that environment, the virtual organisms evolve to utilise the resources and avoid the threats. If the goal of the human designer is to find a solution to a problem she actually wants solving (in my case: a way of distinguishing the brain patterns of someone likely to recover from schizophrenia untreated from the brain of someone likely to require medication, for instance) then she simply designs the environment so that that is the problem the virtual organism has to solve in order to reproduce successfully. She then gets the organism’s solution as a useful byproduct. But we don’t have to do it that way – we can observe, for example, the way the albatross lineage has “solved” the problem of superb gliding and use its optimised wing configuration to guide our glider designs. In that instance, the “problem” was set by “nature”, but as it happened to coincide with one we wanted to solve too, we can use it, without having to design a specialised virtual environment and a set of virtual organisms.

As for your second question: the way we “vet” the results of a GA depends on what we want to know. If we simply want to know (as with AVIDA) whether something evolved that could make use of our artificial environment, in which the exercise of a particular ability provided virtual “food” for the organism, all we have to do is to see whether an organism with that ability evolved. If we want to see what its “solution” was (how it was able to do what it could do), then we look at the solution itself. For example, if I run a classifier-finding GA, I can look at the evolved classifying equations, and see how simple they are, for instance. Indeed with the one I use (I forget who recommended it! Someone here! It is awesome!), simpler equations do better in the “virtual environment” than complex ones, so as well as “reproducing” better if they classify better, they also “reproduce” better if they are simpler. And as what I want is a simple equation, this is extremely useful, and the equation is “scored” both by its effectiveness at classifying and its simplicity.

But this is exactly analogous to, say, the evolution of a penguin (heh), whose lineage had to “solve” the “problem” of catching fish underwater (because there’s not much food above ground in the Antarctic), as well as the problem of not being eaten by leopard seals. Various lineages found solutions to that problem, all different, but the penguin solution was to become extremely streamlined, optimise its wings, originally optimised for flight in air, for “flight” in water, but retain a terrestrial habit so that its forays into fish-filled-but-leopard-seal-infested waters could be as brief as possible and the sheer speed of its underwater flight was sufficient to propel it back out on to the ice at the end of a sortie!

The only difference between the two scenarios is that for the penguin, the “problem” was set by the sheer fact of fish, leopard seals, and no food out of the water: you will breed well if you manage to swim under water, and you will breed even better if you can swim so fast you can propel yourself on to the ice before the leopard seals can get you. Whereas for my evolving equations, the “problem” happens to be set by me for my own purposes: you will breed if you classify my patients well, and you will breed even better if you do it simply.

The key difference is simply that as an intelligent external designer I have an external reason for setting the problem, so my problem is set deliberately in order to fulfill a purpose I have that is irrelevant to the lives of my poor equations. And perhaps the Intelligent Designer of Life set ice floes, fish, and leopard seals as a problem for the penguin lineage to solve for ulterior reasons of her own. But like the penguins, my equations don’t care who or what set the problem. All that matters is that if they solve the problem effectively, their offspring, together with the solution, goes on to breed, whereas if they don’t, they are less likely to do so.

“Fitness” is not a criterion, ever, in nature or in a GA. “Fitness” is a measure of how well an individual meets the criterion. This is what is confusing you, but you are not alone. The “criterion” or “criteria” are the resources and threats inherent in the natural environment, or designed by the GA designer. They can be anything, and, as I said, a GA designer can even set them to be randomly generated (for instance, I once wrote one in which the resources of the environment, and the threats, fluctuated, according to a randomising algorithm; as a result, the population kept adapting to the new set of problems thrown up by my script, just as the finches on the Galapagos do).

So, to repeat: the “fitness” of an organism is simply a measure of how well it breeds in the environment it has to breed in. For my penguins, a slow swimmer is less fit than a fast swimmer. For my equations, a complicated equation is less fit than a simple one. The feature common to both is that they breed less well than a faster/simpler organism, in the first case because it is more likely to be eaten by a leopard seal, and in the second because it is more likely to be “eaten” or “starved” in my virtual environment.

So to answer your questions: the sets of characteristics that comprise “fitness” are those that promote the capacity to breed within that environment. Note the important corollary of this: a characteristic that promotes breeding in one environment may be a handicap in another. Thus a deep-beaked finch may be more fit than a shallower-beaked finch in years in which large seeds are more numerous than small, but less fit in years in which small seeds are more numerous than large. This is why it is important to understand that fitness is a function of the environment in which the organism has to breed, not an absolute property of the organism in any environment.

I will address your satire from the understanding that you have misunderstood not only the “actual darwinian mindset” but the relationship between GAs and natural evolution as proposed by Darwin:

This is an oxymoron. The true “Darwinist” would say: “I need a GA that culls organisms that do not meet criterion X”, understanding that that means that in such an environment, the “fit” organisms will be those that meet criterion X.

All fine, assuming the “Darwinist” is exceptionally stupid and does not understand GAs.

Well, the Programmer is being rather tactful here. What she (I?) would say, is something like: “don’t be silly, of course the fitness depends on the situation. It’s the situation I need to know if I’m going to write your GA.”

I see the Programmer has only slightly more patience than I do! Still, she’s asked the wrong question. What she needs to know is: Well…err…what problems do you want your organisms to evolve to solve?

To which of course,

Is not an answer, although it is a reasonable answer to the one the Programmer actually asked. Not helpful though. As she rightly responds:

Programmer: “No shit, Sherlock.

or “Geez, ask a silly question….”

Unfortunately, from her next response we realise that she’s not much smarter than the “Darwinist”:

That’s her job to figure out – typically, the starting population will have no better chance at solving the problem than an uninformed guess. But one thing she might want to do is figure out what kind of reproductive system the GA critters should have – typically, “sexual reproduction” is a lot faster. And certainly the type of starting critter will have to be appropriate to the problem – you can’t dump a bunch of penguins into the jungle and expect them to evolve into monkeys – they’ll just go extinct.

The programmer seems to have confused the environment (the problem to be solved) with the organisms that will have to evolve within it (the solutions). That’s not helped by the fact that the client seems to have no clue what he wants (I’m not going to dignify him with a female pronoun). Typically, you need some kind of starting functional form. For instance, if you want a classifier, you want to start with an equation that outputs a 1 or a 0. But you don’t need to know whether you are classifying brain scans or shares. And if the client really wants a specific problem solved, it might make things quicker if you started with some partial solutions. But in this case the client doesn’t seem concerned with the problem itself, he just seems to want to see a population of organisms become more fit. Just as in nature, all that matters is that the population finds a way of surviving and breeding – doesn’t matter whether it does it by being good at avoiding predation and eating veg or by being a top predator.

Well, quite.

But that’s because your “Darwinist” is an idiot and knows nothing about either GAs or Darwinism.

So I’m afraid he is a Straw Client.

This, frankly, is gobbledygook. What makes GAs work is that they involve a process by which a population of virtual organisms maximises its ability to breed in the environment presented. You can call the environment “information” if you like, but then, equally, you must accept that the natural environment is also full of “information” about what organisms have to “solve” to survive. Or you could simply say that both virtual and actual environments involve challenges and opportunities that any population must solve to survive.

And it is not at all clear what you mean by the term “target space”. If you mean “the range of possible solutions” then that is unknown at the start of the run (and probably at the finish too). The only “information” about “target space” that is “provided” is that all “targets” must solve the problem of breeding within that environment. Duh. Sure, there is “guidance” in the sense that there must be some kind of gradient to fitness – if only complete solutions have enhanced breeding chances, then no solution will evolve – just as if only a perfect penguin could avoid a leopard seal, no penguin could evolve. But this is the nature of fitness gradients, and it is intrinsic to natural environments and mimicked in GAs.

My equation-evolver has an environment such that an equation that classifies 51% of patients correctly will “breed” better than one that classifies only 50%. If only equations that gave 100% correct answers bred any better than any other, the GA wouldn’t be a GA at all – it would be Blind Search, which is where No Free Lunch comes in, by the way. However, we know, from direct observation, that in nature, fitness gradients are gradual – that small variations can slightly increase fitness.
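The contrast between a graded environment and an all-or-nothing one can be sketched with a toy GA in Python. This is not the equation-evolver described above: the bit-matching “problem” is a hypothetical stand-in for classification accuracy, and the population size, mutation rate, cull-the-worse-half rule, and every function name are assumptions made for illustration.

```python
import random

def graded_fitness(genome, target):
    """Count matching positions, so every small improvement is rewarded."""
    return sum(g == t for g, t in zip(genome, target))

def all_or_nothing_fitness(genome, target):
    """Reward only a perfect match, leaving no gradient to climb."""
    return len(target) if genome == target else 0

def evolve(fitness, target, rng, pop_size=50, generations=1000, mutation_rate=0.02):
    """Return the generation in which a perfect genome first appears, else None."""
    n = len(target)
    population = [[rng.randint(0, 1) for _ in range(n)] for _ in range(pop_size)]
    for generation in range(generations):
        rng.shuffle(population)  # break fitness ties at random
        population.sort(key=lambda g: fitness(g, target), reverse=True)
        if population[0] == target:
            return generation
        survivors = population[: pop_size // 2]  # cull the less fit half
        children = []
        for _ in range(pop_size - len(survivors)):
            parent = rng.choice(survivors)
            # Copy the parent, flipping each bit with a small probability.
            children.append([1 - b if rng.random() < mutation_rate else b for b in parent])
        population = survivors + children
    return None

if __name__ == "__main__":
    target = [1] * 30  # hypothetical stand-in for "classifies every case correctly"
    print("graded:", evolve(graded_fitness, target, random.Random(1)))
    print("all-or-nothing:", evolve(all_or_nothing_fitness, target, random.Random(1)))
```

With `graded_fitness` the population climbs step by step and should reach the target well inside the generation limit. With `all_or_nothing_fitness` every imperfect genome scores the same, so selection has nothing to work on and the run amounts to blind search over 2^30 possibilities.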

So again, there is no difference between virtual organisms evolving in a virtual, designed environment and real organisms evolving in a real one. Both are “smooth” fitness landscapes (have gradations of fitness). Nothing is “smuggled in” (pace Dembski) to a GA that is not sitting there in plain sight in the natural environment.

I’m asking you to quote a “Darwinist” who has proposed chance as an explanation for the complexity of life.

keiths,

The problem is that you have not shown that nature is biased towards white fur polar bears. That is your just-so assumption after you happen to find white-fur polar bears. Until you can tell me what “fit” means in a non-ad-hoc sense, you have no basis for claiming that nature is “biased” towards whatever happens to have survived.

Saying that nature is biased towards whatever happens to have survived because it happens to have survived, then making up a “just so” story about why nature “might have” been biased towards white fur is justifying a tautology with countless ad-hoc rationalizations.

Genetic algorithms do not produce anything of value unless target space information is pre-loaded. You are conflating the results of the program, and an ad-hoc rationalization of those results, with pre-loaded information that allows the GA to produce anything significant in the first place.

What information is pre-loaded into the environment, and into organisms, by which we can judge the outcome? What is the GA of Evolution “trying” to achieve? How do you know that a white fur polar bear is the result of GA, or something that occurred/survived in spite of the GA? Answer: you don’t know; you have no way of knowing; you are arguing a tautology supported by nothing more than post-hoc rationalizations.

The fact is that, under materialism/Darwinism, information meaningful to evolutionary outcomes simply cannot exist, because natural selection cannot be said to even “choose for” survival or procreative success. GA’s choose for a goal; the goal is built in to both the organisms and the environment; in Darwinistic nature, though, there is no goal built in to either nature or the organisms, and certainly not coordinated information, such as we find in GAs.

You are like economists on an island, trying to get a can of food open, thinking that “assume a can opener” will get the can open. You are assuming information exists in your system that you have no right to or explanation for.

Yeah, assuming that target-area specific, coordinated information exists in both the environment and in the self-replicating w/variation organisms, natural GA’s would work.

It’s that assumption that’s the problem.

If you are going to use terms like “target space”, please be clear what you mean by them. I am assuming that you mean “the set of all possible solutions to the problem of surviving and breeding within the current environment”.

If so, the “information” that the environment provides is simply the problem itself. Just as the exam question “List the causes of the second world war” provides information about the problem to be solved, and nothing more. It may be that there is a vast but finite set of solutions to this problem (some worth more marks than others), but the question gives the examinee no guidance as to how to find one of these out of the even vaster but finite set of all possible answers. All it tells you is what the problem is.

Ditto with the natural environment, and ditto with GAs.

If there is one single “target” (way of increasing reproductive success), or a set of targets all of which confer exactly the same degree of increased reproductive success, then, if the “target space” is small in relation to all possible variants, you are correct, the “target cannot be acquired”. What makes the target acquirable is a property of the target space itself – many possible variants slightly increase reproductive success, and if partial solutions are similar to better solutions, then the “target space” is graduated, and the best solutions are far more likely to be found.

This is another way of saying, in jargon, that if the “fitness landscape” (not the same as “the environment” but more like your “target space”) is smooth then good targets will tend to be found. If it is extremely rough, then they won’t, and there are even theoretical fitness landscapes in which the “population” is systematically steered away from the good solutions. This is why the No Free Lunch theorems say that averaged over all fitness landscapes search algorithms are no better than blind search.
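One rough way to picture “smooth” versus “rough” is to ask how much fitness changes when a single bit of a genotype is flipped. The sketch below is only an illustration under assumed definitions: `smooth`, `rough` and `neighbour_gap` are hypothetical names, and the “rough” landscape is simply an arbitrary repeatable number per genotype, not any landscape from the literature.

```python
import random


def smooth(bits):
    """Smooth landscape: fraction of 1 bits, so one-bit neighbours score alike."""
    return sum(bits) / len(bits)


def rough(bits):
    """Rough landscape: an arbitrary but repeatable score in [0, 1) for each
    genotype, assigned without regard to the scores of similar genotypes."""
    key = int("".join(str(b) for b in bits), 2)
    return random.Random(key).random()


def neighbour_gap(fitness, length=16, trials=500, seed=2):
    """Mean absolute fitness difference between a random genotype and a
    copy of it with exactly one bit flipped."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        parent = [rng.randint(0, 1) for _ in range(length)]
        child = list(parent)
        child[rng.randrange(length)] ^= 1
        total += abs(fitness(parent) - fitness(child))
    return total / trials


if __name__ == "__main__":
    print("smooth:", neighbour_gap(smooth))
    print("rough: ", neighbour_gap(rough))
```

On the smooth landscape a parent and its one-bit mutant always differ by exactly 1/16 of the fitness range, so knowing the parent's fitness tells you a lot about the offspring's. On the rough one the parent's score is meant to tell you essentially nothing about the mutant's, which is the situation in which search does no better than blind sampling.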

Clearly, GAs are set up to be smooth. So the question is: can this smoothness only result from intelligence?

The answer is: we don’t know, but what we do know is that the one we actually observe in nature is smooth. This is another way of saying: offspring tend to be very similar to their parents (we wouldn’t call it “self-replication” if organisms spawned organisms totally different from themselves), and that similar organisms tend to have similar reproductive success in the same environment.

And all a GA is doing is simulating such a scenario – virtual organisms spawn offspring similar, though not identical, to themselves, in an environment in which similar organisms have similar reproductive success.

And it works in nature, and it works in GAs.

Nope. At least not in any way in which I can parse it. The “target” is, presumably, an organism that solves the problem extremely well. No GA population starts with an optimised organism (unless by phenomenal good luck). In nature, of course, depending on where you “start” (protobionts? Penguins on a steadily freezing continent? Windblown finches on a new island?) the population starts by being at least minimally successful in the current environment or it wouldn’t be a population of self-replicators at all, and in the case of the penguins and finches, probably pretty well adapted already. But the whole point of the discussion (or of a GA), is to find better solutions, and you don’t, by definition, have those when you start. So again, the only “information” the population has is the problem itself (leopard seals; brains to classify).

It depends how long you want it to take. If you want a flying machine, and already you have a lot of solutions, sure. But if you want a walking machine, as in that lovely example someone posted above, no, you don’t have to start with walkers at all. Obviously the system has to have some potential to solve the problem – no system, natural or man-made, has yet evolved the ability to apparate. That’s probably because it isn’t possible. But it’s pretty remarkable what is.

Your last clause doesn’t make a lot of sense, but what sense it does make is addressed by my stuff about smooth fitness landscapes above. If a fitness landscape is smooth, that is another way of saying that similar variants have similar fitness. If you want to call that “coordination” fine. But frankly, it’s much easier to envisage a natural scenario in which that is the case (tall people run faster from tigers, and even taller people run even faster) than one in which it is not (people who are 5’11” run fast from tigers but people who are 6′ fall flat on their faces at the sight of a tiger and people who are 5’10” run towards the tiger).

It doesn’t seem to me to require much “intelligence” for the world to have turned out so that similar things have similar properties.

William,

Seriously? You don’t think a white polar bear has a reproductive advantage over a black one? A swift gazelle over a slow one? A streamlined dolphin over a block-shaped one? A saber-toothed tiger over a toothless one?

What a fanciful world you live in.

keiths,

In fairness, I am not aware of any experiments comparing the survival chances of polar and white bears in the Arctic. So WJM gets a point on that.

However, experiments comparing survival rates of peppered moths have been done and they demonstrate a bias imposed by the environment. WJM loses on that.

I see that Lizzie has already responded in detail to your comment, William, but your misunderstanding of genetic algorithms is so profound that I think it might help to make the parallels with observed biological systems explicit.

Most GAs, including my simple implementation, consist of a few core elements: a collection of digital strings, a set of evolutionary mechanisms to apply, and a fitness function.

The digital strings, of course, model a population of a particular species.

The set of evolutionary mechanisms sometimes consists only of a mutation algorithm that operates on the population. Another common mechanism is crossover to simulate sexual reproduction. More complex mechanisms are also used. These model observed biological mechanisms.

The fitness function is typically chosen to assign higher fitness to digital organisms that better meet the criteria the GA is intended to optimize. This may be as specific as a better antenna design or as general as survival in a particular virtual world. The fitness function models the environment in which we observe biological evolution take place.

A GA run typically starts with a randomized population of strings and repeatedly applies the evolutionary mechanisms, selecting the next generation based on the fitness function. This models the heritable variation in reproductive success we observe in biological systems.
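The elements listed above can be sketched in a few lines of Python. This is not the simple implementation mentioned earlier, just a minimal toy under stated assumptions: the “environment” is a made-up fitness function that rewards 1 bits, and the names `fitness`, `mutate`, `crossover` and `run_ga`, along with all the parameter values, are invented for the example.

```python
import random


def fitness(bits):
    """Toy 'environment': a string scores one point per 1 bit."""
    return sum(bits)


def mutate(bits, rate, rng):
    """Flip each bit independently with probability `rate`."""
    return [b ^ 1 if rng.random() < rate else b for b in bits]


def crossover(a, b, rng):
    """Single-point crossover between two parents of equal length."""
    point = rng.randrange(1, len(a))
    return a[:point] + b[point:]


def run_ga(length=40, pop_size=30, generations=60, rate=0.02, seed=0):
    """Return the best fitness seen in each generation, starting from a
    randomized population of digital strings."""
    rng = random.Random(seed)
    pop = [[rng.randint(0, 1) for _ in range(length)] for _ in range(pop_size)]
    history = [max(fitness(p) for p in pop)]
    for _ in range(generations):
        pop.sort(key=fitness, reverse=True)
        parents = pop[: pop_size // 2]   # fitter half gets to breed
        children = [pop[0]]              # keep the current best unchanged
        while len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            children.append(mutate(crossover(a, b, rng), rate, rng))
        pop = children
        history.append(max(fitness(p) for p in pop))
    return history


if __name__ == "__main__":
    h = run_ga()
    print("best fitness, first vs last generation:", h[0], h[-1])
```

Note that nothing in the loop knows what a good string looks like. The only place the “problem” appears is in `fitness`, which plays the role of the environment, and carrying the best individual forward unchanged is a design choice of this sketch (so the best score never drops), not a requirement of GAs in general.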

Now that you have a better understanding of what a GA actually does and pointers to additional information sufficient to educate yourself further, do you find anything objectionable about GAs?

Snow isn’t white? Camouflage isn’t useful for a predator?

Reflecting heat isn’t useful in a cold environment?

ETA brainfart

Why on earth would you think there wasn’t a strong bias to whiteness in arctic animals?

William, do you seriously mean to suggest you cannot see any advantage for polar bears to have the hollow colourless hair that makes them look white against snow and ice?

Some information for you on polar bear fur.

I find the close relatedness of Polar Bears to Ursus arctos (Polar Bear/Grizzly Bear hybrids) fascinating. More info here

ETA changed “reason” to “advantage”

The hikers’ joke:

B: You can’t outrun a bear.

C: I don’t have to outrun the bear, I just have to run faster than you!

Perhaps not for the bear!

I’d suggest the survival advantage was for bears with colourless hair (or the allele for it) in a population of Brown bears. It is supposedly a textbook example of peripatric speciation.

Alan Fox,

It could be, Alan. The advantage of the peppered moth example is that the environmental bias was measured experimentally. The experiments were successfully reproduced recently.

heh. You are right! I have a kind of conceptual dyslexia sometimes!

Yes, of course, the clever thing about polar bear hair is its transparency.

And the hollowness! Interestingly hair on the back of Brown Bears exhibits hollowness.

And walrus whiskers actually have a blood supply and nerves!

olegt,

Controlled experiments aren’t the exclusive source of scientific knowledge, of course. Observations also count, including observations of our own successes and failures as predators hunting white animals against a snowy backdrop.

Given what we know about camouflage, both experimentally and through observation, it would be beyond silly to suggest, as William does, that the preponderance of white coats among arctic animals is a mere fluke, conferring no survival advantage.

He goes even further, arguing that selection is tautologous:

William, do you believe that children without congenital heart defects just “happen to survive” better than those with such defects? That it is purely a tautology?

Good grief! GAs, now? Truly, this is an EveryThread.

keiths,

One wonders what the Designer is doing pissing around giving organisms these wonderful ‘design’ features Paleyites revel in, if they don’t actually do anything for survival.

It reminds me of JoeG’s “reason” why natural selection is irrelevant. The fittest organism might have had a rock randomly fall on it, therefore selection does not/cannot work.

The fact that would have to happen every single time seems to have escaped JoeG.

That we observe organisms adapted to their environment is just a fluke, yeah, that must be it.

From your link:

heh.

WJM has given us another wonderful example of an ID-Creationist not knowing a thing about a topic (GAs) yet mindlessly repeating the IDC propaganda he has previously read. AFAIK Dembski was the first to use the ridiculous hand wave “GAs only work because you smuggle in information” meme. Dembski did this because IDC had the embarrassing problem that algorithms based on empirically observed evolutionary processes created both new information and IC structures, something the IDCers claimed was impossible.

I wonder if WJM thinks real world evolution of animals by artificial selection is also “smuggling in information”. Dachshunds for example were specifically bred to have short legs so they could go into badger burrows. How did the dog owners who did the breeding “smuggle in” the information to the dogs’ genome to produce short legs? Any ideas WJM?

I suppose one could argue the random mutation is perpetually creating new information and the environment is selecting the best of it.

This is very true, as can be seen by looking at discussions/definitions at UD regarding GA’s. William has practically copypasted directly from UD.

I guess if you don’t know, learning is not an option for ID supporters. Just repeat someone else’s predigested misconceptions.

Richardthughes,

If evolution is true, that’s a good summary. The dynamic (chaotic) nature of the environment (leopard seals are part of the penguin’s environment and vice versa- both changing over time in an attempt to stay alive) is perhaps not emphasized enough.

Oh lololololololol

That page is a fount of information!

It is the re-hybridisation of Polar and Brown Bears that I think is fascinating, seemingly due to climate change. The resultant hybrids suggesting an echo of what the original transitionals might have looked like and suggesting why they might have preferentially survived at the edge of a new niche.

QFMFT.

People like Billy boyo can tell they’re “design” features, because, look, how obvious it is that the Interior Decorator designed them to give the organism its wonderful appearance — and that’s not a tautology, of course not, because reasons.

“He who has eyes to see, let him see”.

Richard,

Which is why IDers don’t much like Shannon information. Stochastic processes produce heaps of Shannon information, and that makes IDers nervous.

Not to ‘peanut gallery’, but Joe has made the odd claim “Heck the environment doesn’t even select.” If that were true, men, fish and birds would all be equally viable underwater, in the air and on land.

Joe is confused. But I’m genuinely glad to see him about again, and completely back to his old self 🙂 I really had thought that he must be dead.

Lizzie,

Yes, he is still ‘never wrong’ but at least he’s given up bullying. No doubt he was away on special ops in Afghanistan. Anyway, welcome back Joe. Why not use your considerable…erm…energy! to create a positive case for ID that doesn’t require criticism of any other theory but stands on its own experimental and observational merit?

Yep. He’s back to being infatuated with you on his blog, calling you everything from willfully ignorant to a clueless freak to a pathetic loser.

How is it you manage to hide those devil horns and cloven hooves in public? 🙂

There’s just as much information in a failed (lethal) gene sequence as in a useful one.

“Well, I don’t think “chance” is anything like specific enough to be a “cause”. You might as well cite “shit happens” as a cause.

The question is “which particular shit?”

But yes, of course, evolution in practice is driven by non-deterministic processes, not least because at least some mutations are probably the result of quantum level events (although it also works perfectly well in deterministic simulations). But again, that tells us very little.

I’d say what drives evolution is a chaotic system with “attractor basins” representing locally optimal configurations for reproductive success.

And if that sounds too confusing, I suggest you read something on non-linear systems, because that is what evolution is.”

Do you know this darwinist?

And I also quoted Gould and mentioned Monod. Alan is telling me that I’m here because, by chance, mom met dad.

True. Information can also kill.

William tends to quietly disappear when his position becomes indefensible. I hope that doesn’t happen this time.

Keep Richard’s sartorial advice in mind, William.

Lizzie asked you to quote a “Darwinist” who has proposed chance as an explanation and you quote her explicitly stating that “chance” is not specific enough to be a cause. How is this in any way responsive?

Are you confused by the distinction between specific processes that are non-deterministic and the abstract concept of “chance”? Lizzie’s entire original post in this thread explains exactly why “chance” isn’t useful as an explanation, complete with examples. Perhaps you need to re-read it.

Ah, I never thought of the army. Silly me. Well, I really am glad to see him safely home, then.

Thank you for your service, Joe!

Even the top brass occasionally need their toaster ovens repaired. Way to go Joe G!